We started this series nineteen articles ago with electrons moving through copper wire. We traced voltage through semiconductors, built transistors into processors, stacked processors into data centers, and connected them with fiber optic cable. We trained neural networks, scaled language models across thousands of GPUs, and in the last few articles examined the security of the systems we built and the encryption that protects them.

This final article is about what happens when AI stops waiting for instructions.

WHAT MAKES AN AGENT

The word "agent" gets thrown around loosely. Every chatbot with a plugin gets called an agent now. So let me draw a line.

A chatbot waits for your message, generates a response, and stops. It is reactive: you type, it replies, you type again.

A tool is even simpler: you give it input, it gives you output, done. A calculator is a tool. Neither one decides what to do next.

An agent is different. An agent perceives its environment, reasons about what it observes, and takes actions to accomplish a goal. Then it observes the results of those actions and decides what to do next.

This creates a loop: perceive, reason, act, observe, repeat. The loop continues until the agent decides the goal is met or determines it cannot proceed.
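The loop is easier to see in code. Below is a minimal sketch, not a real agent framework. It assumes a toy environment (a counter the agent must drive to a target) and a hand-written `decide` function standing in for the language model. The names `Environment`, `decide`, and `run_agent` are hypothetical, and the `max_steps` budget is an added assumption that plays the role of "determines it cannot proceed."

```python
from dataclasses import dataclass


@dataclass
class Environment:
    """Toy world: a counter the agent must move to a target value."""
    value: int = 0
    target: int = 3

    def observe(self) -> dict:
        return {"value": self.value, "target": self.target}

    def apply(self, action: str) -> None:
        if action == "increment":
            self.value += 1
        elif action == "decrement":
            self.value -= 1


def decide(observation: dict) -> str:
    """Stand-in for the model: choose the next action from what was perceived."""
    if observation["value"] == observation["target"]:
        return "done"
    return "increment" if observation["value"] < observation["target"] else "decrement"


def run_agent(env: Environment, max_steps: int = 10) -> list[str]:
    """Perceive, reason, act, observe, repeat; stop when done or out of budget."""
    history: list[str] = []
    for _ in range(max_steps):
        observation = env.observe()   # perceive (also observes the last action's result)
        action = decide(observation)  # reason
        if action == "done":          # the agent judges the goal met
            history.append("done")
            return history
        env.apply(action)             # act
        history.append(action)
    history.append("gave up")         # cannot proceed within the step budget
    return history


print(run_agent(Environment(value=0, target=3)))
# ['increment', 'increment', 'increment', 'done']
```

The step budget is the crudest possible "cannot proceed" exit; in practice an agent would also stop on errors or when the model reports that it is stuck.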

That loop is the whole distinction. A chatbot generates text. An agent takes actions in the world.

Ever tried to book a complicated multi-city trip? You search flights, compare prices, check hotel availability, cross-reference with your calendar, and iterate until everything lines up. An AI agent does something similar: it breaks the goal into steps, executes each one using whatever tools are available, and adjusts based on results. The difference is that nobody is typing prompts between each step.

HOW AGENTS WORK

The perception-reasoning-action loop sounds simple, but making it work requires three capabilities that basic language models do not have out of the box.

Tool Use

A language model alone can only produce text. An agent needs to interact with the real world, and it does this through tools: functions the agent can call to search the web, read a file, execute code, send an email, query a database, or click a button on a screen.

When an agent encounters a task requiring external information, it generates a structured tool call instead of plain text. The system executes the tool and feeds the result back to the model. The model then reasons about the result and decides the next step.

This is worth pausing on. The model itself never does anything; it writes instructions and the surrounding system carries them out. The intelligence is in deciding which tool to call, with what arguments, and in what order.
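To make that division of labor concrete, here is a minimal sketch of the "surrounding system" side. It assumes the model emits its tool call as a JSON object with hypothetical `tool` and `arguments` fields; real model APIs each define their own tool-call format. The two tools are toy stand-ins.

```python
import json
from typing import Callable


# Toy tools the agent is allowed to call (hypothetical stand-ins).
def read_file(path: str) -> str:
    fake_disk = {"notes.txt": "Meeting moved to Friday."}
    return fake_disk.get(path, f"error: {path} not found")


def add(a: float, b: float) -> float:
    return a + b


TOOLS: dict[str, Callable[..., object]] = {"read_file": read_file, "add": add}


def execute_tool_call(model_output: str) -> str:
    """Parse the model's structured call, run the named tool, return text for the model."""
    try:
        call = json.loads(model_output)
        tool = TOOLS[call["tool"]]                  # KeyError if unknown or missing
        result = tool(**call.get("arguments", {}))  # TypeError if arguments don't fit
    except (json.JSONDecodeError, KeyError, TypeError) as exc:
        # Failures go back to the model as text so it can try something else.
        return f"error: {type(exc).__name__}"
    return str(result)


print(execute_tool_call('{"tool": "add", "arguments": {"a": 2, "b": 3}}'))  # 5
print(execute_tool_call('{"tool": "send_email"}'))  # error: KeyError
```

Note that the model never touches `read_file` or `add` directly. It only produces the JSON string, and whatever `execute_tool_call` returns becomes the observation for its next reasoning step.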

Memory

A chatbot forgets everything between sessions. An agent needs to remember. Short-term memory (usually the conversation context) lets the agent track what it has done and what it still needs to do. Long-term memory (stored in databases or files) lets the agent recall information across sessions.

Without memory, an agent solving a multi-step problem would forget step one by the time it reaches step five. Memory is what turns a sequence of independent decisions into a coherent plan unfolding over time.
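A minimal sketch of the two kinds of memory, under two simplifying assumptions: short-term memory is a bounded list of recent messages (a crude stand-in for a context window, which is really measured in tokens), and long-term memory is a JSON file on disk. `AgentMemory` and its methods are hypothetical names.

```python
import json
import os
import tempfile
from collections import deque
from typing import Optional


class AgentMemory:
    """Hypothetical two-tier memory: bounded short-term context plus a long-term file."""

    def __init__(self, store_path: str, context_limit: int = 4):
        # Short-term: only the most recent messages fit (a crude stand-in for a context window).
        self.context: deque = deque(maxlen=context_limit)
        # Long-term: a JSON file on disk that outlives this session.
        self.store_path = store_path

    def add_message(self, message: str) -> None:
        self.context.append(message)  # when full, the oldest message falls out

    def remember(self, key: str, value: str) -> None:
        data = self._load()
        data[key] = value
        with open(self.store_path, "w") as f:
            json.dump(data, f)

    def recall(self, key: str) -> Optional[str]:
        return self._load().get(key)

    def _load(self) -> dict:
        if not os.path.exists(self.store_path):
            return {}
        with open(self.store_path) as f:
            return json.load(f)


path = os.path.join(tempfile.mkdtemp(), "memory.json")
session1 = AgentMemory(path)
for step in ["step 1", "step 2", "step 3", "step 4", "step 5"]:
    session1.add_message(step)
session1.remember("deploy_target", "staging")
print(list(session1.context))  # ['step 2', 'step 3', 'step 4', 'step 5']

session2 = AgentMemory(path)  # new session: short-term context starts empty
print(list(session2.context), session2.recall("deploy_target"))  # [] staging
```

In the first session, step 1 has already fallen out of the bounded short-term window. That is the reason anything the agent must not lose gets written to long-term storage, where the second session can still find it.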

Planning

The hardest part. Given a goal like "deploy this feature to production," an agent needs to decompose it into subtasks: write the code, run the tests, fix any failures, create a pull request, wait for review, merge, deploy. Each subtask might itself decompose further.
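Decomposition is naturally a tree: a goal has subtasks, subtasks may have their own subtasks, and the work that actually gets executed is the leaves, in order. A minimal sketch, assuming a hand-written plan where a real agent would have the model generate one. `Task` and `leaf_steps` are hypothetical names, and splitting "run the tests" into unit and integration tests is an illustrative assumption.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    name: str
    subtasks: list["Task"] = field(default_factory=list)


def leaf_steps(task: Task) -> list[str]:
    """Depth-first walk: a task with no subtasks is a concrete, executable step."""
    if not task.subtasks:
        return [task.name]
    steps: list[str] = []
    for sub in task.subtasks:
        steps.extend(leaf_steps(sub))
    return steps


plan = Task("deploy this feature to production", [
    Task("write the code"),
    Task("run the tests", [Task("run unit tests"), Task("run integration tests")]),
    Task("fix any failures"),
    Task("create a pull request"),
    Task("wait for review"),
    Task("merge"),
    Task("deploy"),
])

for step in leaf_steps(plan):
    print(step)
```

A static tree like this cannot express "fix any failures, but only if the tests failed." That conditional, result-dependent structure is part of why planning is hard, and why agents typically re-plan after observing what each step actually did.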

Current agents use several planning strategies. ReAct (Reason + Act) interleaves thinking and action: the model writes out its reasoning, takes an action, observes the result, reasons again. Chain-of-thought prompting asks the model to think step by step before acting. Tree-of-thought explores multiple possible plans and selects the best one.
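In ReAct, the model's output is plain text in a repeating pattern of Thought and Action lines, and the surrounding system parses out each action and appends an Observation. The sketch below assumes a `tool[argument]` action syntax similar to the one in the ReAct paper's question-answering examples, a scripted list of model turns in place of a real model, and a toy `lookup` tool; all of these are assumptions for illustration.

```python
import re
from typing import Callable, Iterator

# Matches a line such as "Action: lookup[Eiffel Tower]" and captures tool name and argument.
ACTION_RE = re.compile(r"^Action:\s*(\w+)\[(.*)\]\s*$", re.MULTILINE)


def lookup(term: str) -> str:
    """Toy knowledge tool (hypothetical)."""
    facts = {
        "Eiffel Tower": "The Eiffel Tower is in Paris.",
        "Paris": "Paris is the capital of France.",
    }
    return facts.get(term, "No result.")


def react(model_turns: Iterator[str], tools: dict[str, Callable[[str], str]],
          max_steps: int = 5) -> tuple[str, str]:
    """Interleave model reasoning with tool calls; return (answer, transcript)."""
    transcript = ""
    for _ in range(max_steps):
        turn = next(model_turns)  # a real agent would call the model on prompt + transcript
        transcript += turn + "\n"
        match = ACTION_RE.search(turn)
        if match is None:
            return "no action", transcript
        tool, arg = match.group(1), match.group(2)
        if tool == "finish":
            return arg, transcript
        observation = tools[tool](arg) if tool in tools else f"Unknown tool {tool}."
        transcript += f"Observation: {observation}\n"  # fed back before the next thought
    return "step budget exhausted", transcript


scripted = iter([
    "Thought: I need to know where the Eiffel Tower is.\nAction: lookup[Eiffel Tower]",
    "Thought: It is in Paris. Which country is Paris in?\nAction: lookup[Paris]",
    "Thought: Paris is the capital of France, so that is the answer.\nAction: finish[France]",
])
answer, trace = react(scripted, {"lookup": lookup})
print(answer)  # France
```

The transcript is the point: each Thought is written with the previous Observation in view, which is what distinguishes ReAct from reasoning once up front and then acting blindly.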

None of these are perfect. Planning remains the weakest link in most agent systems today, and it is where most failures originate. An agent that can use tools flawlessly but plans poorly will confidently execute the wrong sequence of steps.

THE AGENTS YOU CAN USE TODAY

This is not hypothetical. Autonomous AI agents are shipping as products right now.

Devin

Cognition Labs launched Devin in early 2024 as what they called the first AI software engineer. Given a task description, Devin can plan an approach, write code across multiple files, run tests, and debug failures. It operates in its own development environment with a terminal, browser, and code editor, making decisions at each step without human prompting.

AutoGPT

AutoGPT appeared in March 2023 and demonstrated something that caught the entire industry off guard: an AI system that could recursively prompt itself. You give it a high-level goal, and it creates its own task list, executes tasks one by one, evaluates results, and generates new tasks as needed. It was rough, expensive, and often went in circles, but it proved the concept.

Claude Code

Anthropic’s Claude Code is a command-line agent that operates directly in your development environment. It reads your codebase, understands the project structure, writes and edits files, runs commands, and manages git operations. What makes it interesting is the permission model: it asks before taking actions that could have real consequences, creating a human-in-the-loop safety layer.
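The general idea of a human-in-the-loop permission layer fits in a few lines. This sketch is not how Claude Code implements it. It assumes a hypothetical split between read-only actions that run freely and everything else, which requires explicit approval; the `approve` callback stands in for a prompt to the user, and `fake_run` stands in for actually executing the action.

```python
from typing import Callable

# Hypothetical allowlist: actions that only read and cannot change anything.
READ_ONLY_ACTIONS = {"read_file", "list_directory", "search_code"}


def gated_execute(action: str, detail: str,
                  run: Callable[[str, str], str],
                  approve: Callable[[str, str], bool]) -> str:
    """Run read-only actions directly; ask a human before anything else."""
    if action in READ_ONLY_ACTIONS or approve(action, detail):
        return run(action, detail)
    return f"denied: {action} {detail}"  # the refusal is reported back to the agent


def fake_run(action: str, detail: str) -> str:
    return f"ran {action} {detail}"


def ask_user(action: str, detail: str) -> bool:
    # A terminal version might be: input(f"Allow {action} {detail}? [y/N] ") == "y"
    return False  # simulate a user who declines


print(gated_execute("read_file", "README.md", fake_run, ask_user))  # ran read_file README.md
print(gated_execute("run_shell", "rm -rf build/", fake_run, ask_user))  # denied: run_shell rm -rf build/
```

The design choice worth noticing is that the list names the harmless actions rather than the dangerous ones, so any action the gate does not recognize defaults to asking.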

OpenAI Operator

OpenAI’s Operator, released in January 2025, takes a different approach. Instead of working through APIs and code, it controls a web browser visually, the same way a person would. It reads screens, clicks buttons, fills forms, and navigates websites. The computer-use paradigm means it can interact with any website without needing custom integrations.

Manus

Manus, launched in March 2025, bills itself as a general-purpose AI agent. It can research topics, create documents, build websites, and execute multi-step workflows that span different tools and platforms. It represents the trend toward agents that attempt to handle whatever you throw at them rather than specializing in a single domain.

What all these share is the loop. They perceive (read code, see screens, receive data), reason (decide what to do next), and act (write code, click buttons, execute commands). The specific tools and interfaces differ, but the architecture is the same.

WHEN SOFTWARE STARTS TO LOOK LIKE A PERSON

Here is where things get philosophically uncomfortable.

A traditional program does exactly what you tell it. Nobody worries about whether a spreadsheet has rights. But when software perceives its environment, reasons about goals, and takes actions with real consequences, some old assumptions start to creak.

John Searle posed the Chinese Room argument in 1980. Imagine a person in a room who receives Chinese characters through a slot, follows a rulebook to produce appropriate responses, and passes them back out. To an outside observer, the room speaks Chinese.

But the person inside understands nothing. Searle argued that symbol manipulation, no matter how sophisticated, is not understanding.

Modern AI agents are a much larger room with a much better rulebook. They process tokens, match patterns, and generate responses that are often indistinguishable from human reasoning. My take is that Searle is technically right and practically losing the argument, because the gap between "appears to understand" and "actually understands" is getting harder to detect and increasingly irrelevant to the question of what we do about it.

Functionalism offers a different lens. Functionalists define mental states by what they do, not what composes them. Pain is pain not because of specific neurons firing but because of the functional role it plays: it makes you withdraw, avoid, and learn. If an AI system plays the same functional roles, does it matter that it runs on silicon instead of neurons?

Nobody credible is arguing that today’s AI agents are sentient. The more immediate question is about moral status: at what point does an entity that perceives, reasons, acts, and adapts deserve some form of consideration?

The EU AI Act, which came into force in August 2024, sidesteps this entirely. It treats AI systems as products, not entities. A 2017 European Parliament resolution on robotics did float the idea of electronic personhood for the most sophisticated autonomous robots, but the proposal drew sharp criticism from experts and was never adopted. For now, AI agents are tools, full stop.

But legal categories have a habit of lagging behind reality. If you want to follow this thread, the philosophy of consciousness and the hard problem (why does subjective experience exist at all?) are rabbit holes worth entering. David Chalmers’ work is a good starting point.

WHO IS RESPONSIBLE WHEN AN AGENT ACTS

Suppose an AI agent managing your investment portfolio makes a trade that loses you fifty thousand dollars. Who do you sue?

This is not a thought experiment. Autonomous trading systems have been making real financial decisions for years. AI agents are now booking travel, sending emails, modifying code in production systems, and interacting with other services on behalf of users. When something goes wrong, the liability question is genuinely unresolved.

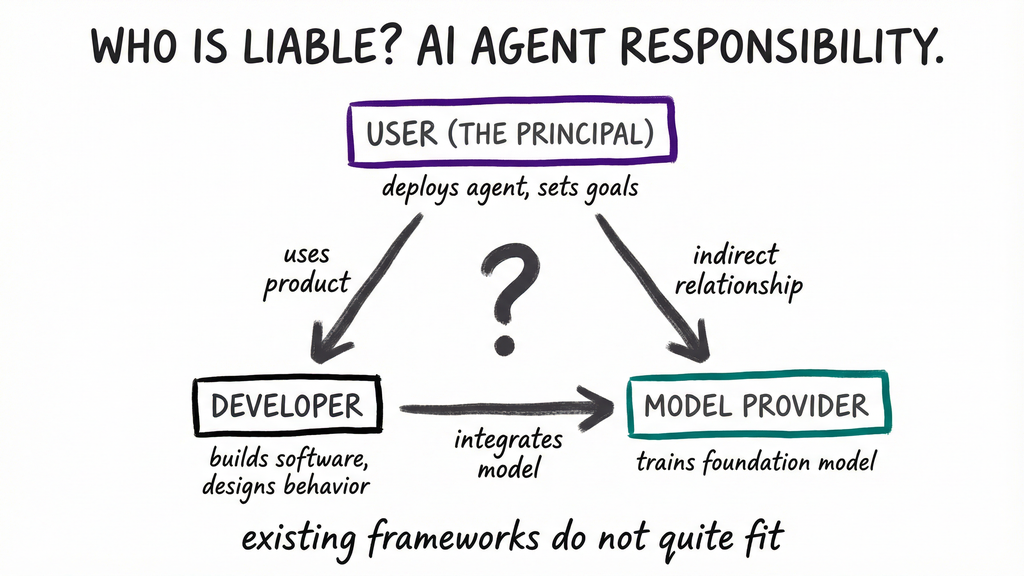

Three parties are in the frame: the user (the principal), who deployed the agent and gave it a goal; the developer, who built the agent software and designed its behavior; and the model provider, who trained the foundation model the agent reasons with.

Traditional product liability would hold the manufacturer responsible: if the software is defective, the company that made it pays. This works when behavior is predictable. If a car’s brakes fail, the manufacturer is liable. But an AI agent’s behavior is not fully predictable; its actions emerge from a model with billions of parameters that nobody fully understands.

Agency law offers another framework. When a human agent (say, a stockbroker) acts on your behalf and makes a mistake, you, the principal, are usually liable because the agent acted under your authority. If you tell an AI agent to "manage my calendar" and it deletes a meeting, are you responsible because you delegated?

The honest answer is that existing legal frameworks do not quite fit. The EU AI Act assigns obligations based on risk categories but does not address the liability chain for autonomous agent decisions in detail. The US has no federal AI liability framework at all.

If the legal dimension interests you, comparative AI regulation across jurisdictions is worth tracking. The EU, US, China, and UK are all taking different approaches, and the divergence is growing. Algorithmic accountability, where regulators require companies to explain automated decisions, connects directly to the agent liability question.

WHERE THE LOOP CLOSES

Multi-agent systems take the single-agent concept and multiply it. Instead of one agent working alone, multiple agents collaborate: one researches, another writes, a third reviews, a fourth publishes. They communicate through shared memory or message passing and can work in parallel on different parts of a task.

This is already happening in production. Development teams use agent pipelines where one agent writes code, another writes tests, and a third reviews both. The pattern scales in ways that single agents cannot.

The risks scale too. A single agent making a mistake is manageable. A swarm of agents amplifying each other’s errors without human oversight keeps AI safety researchers up at night. If AI safety and alignment interest you, the technical work on agent guardrails, sandboxing, and capability control is some of the most consequential research in computer science right now.

We started with voltage. We ended with software that makes its own decisions. Every layer in between (the semiconductors, the processors, the networks, the software, the machine learning, the language models) was a prerequisite for this moment. Agents are where computing stops being a tool you operate and starts being a system you supervise.

That transition, from operator to supervisor, is the real story of this series.

T.

References

- ReAct: Synergizing Reasoning and Acting in Language Models - Yao et al. 2022, the ReAct framework interleaving reasoning with tool use
- EU Artificial Intelligence Act - Full text of the EU AI Act (entered into force August 2024), the first major AI regulatory framework
- Minds, Brains, and Programs - Searle 1980, the Chinese Room argument, central to debates about AI understanding
- A Survey on Large Language Model based Autonomous Agents - Wang et al. 2023, a survey of agent architectures, planning, tool use, and memory
- The Conscious Mind - Chalmers 1996, on consciousness and the hard problem, relevant to AI personhood