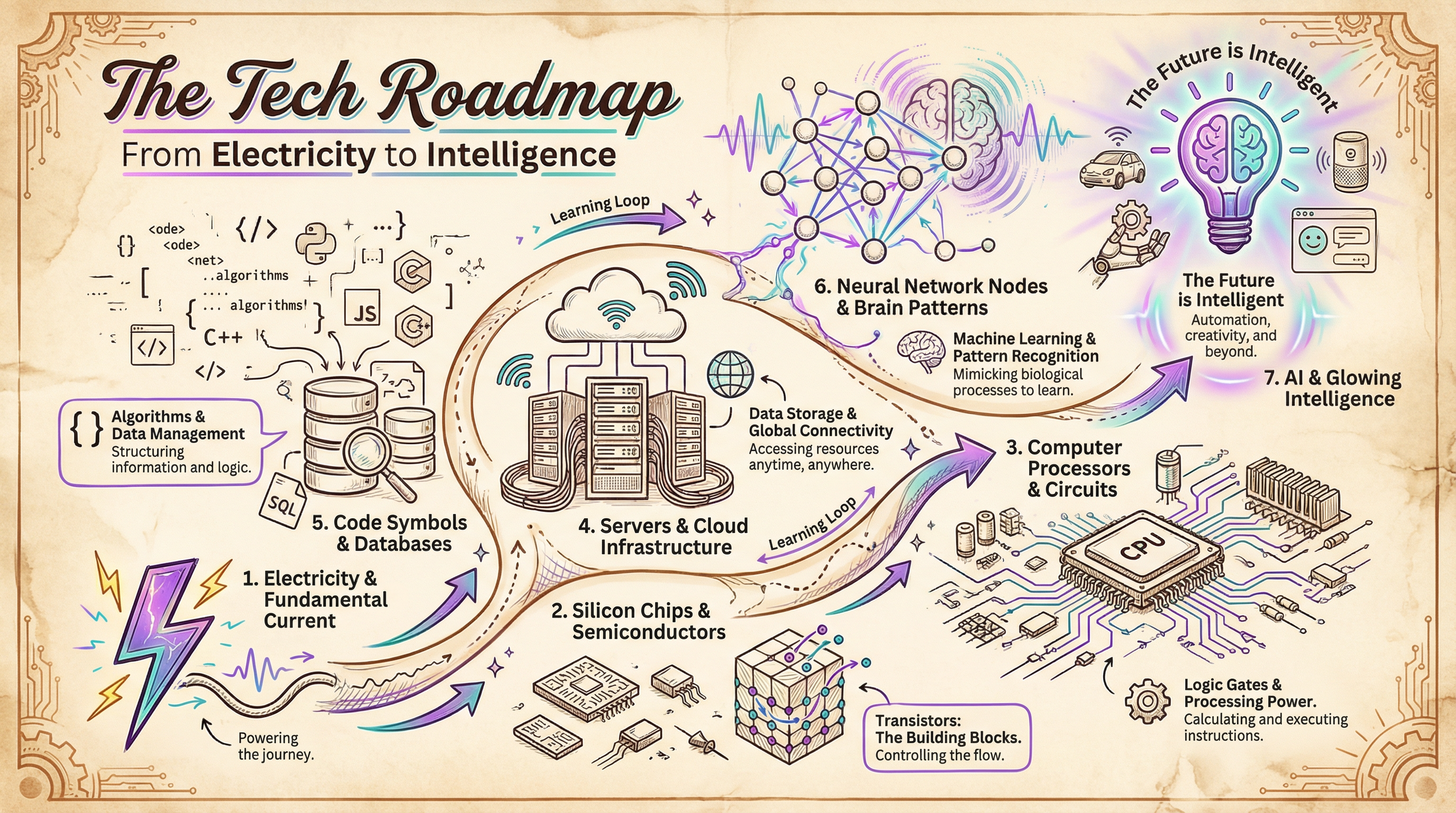

This is a 20-article series covering technology from the ground up. It starts with electricity; moves through silicon, processors, and data centers; climbs up through software, algorithms, and databases; and ends with machine learning, neural networks, large language models, and where all of it is heading.

WHY START FROM ELECTRICITY

Because AI is not magic. It is electricity moving through silicon in very specific patterns. Everything between a wall outlet and ChatGPT is a stack of abstraction layers, each one building on the last.

Most explanations of AI start in the middle. They assume you already know what a GPU does, how data centers work, or what “training a model” means. This series does not assume any of that. It starts at the bottom and builds up, one layer at a time.

When you understand the layers, you understand the constraints. When you understand the constraints, you can tell the difference between what is real and what is hype. Honestly, that distinction matters more now than it ever has.

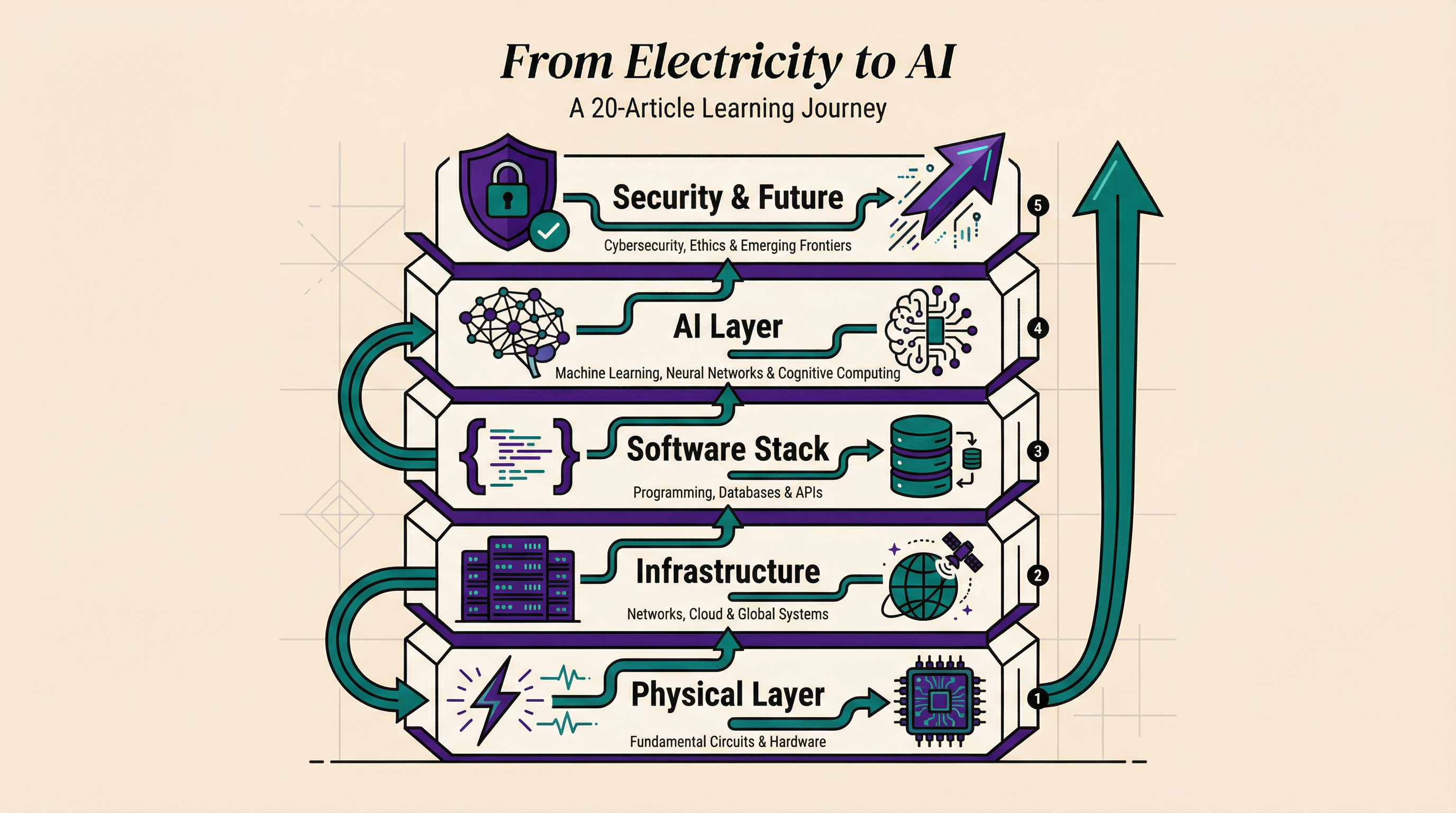

WHAT THE SERIES COVERS

The series is organized into five layers, each one building on the previous.

The Physical Layer. What electricity actually is, how semiconductors turned sand into the most important material in history, and why the difference between a CPU and a GPU matters for everything that follows. If you have ever wondered what silicon actually does inside a chip, this is where you find out.

The Infrastructure. What “the cloud” looks like in real life (spoiler: it is not a cloud), how much energy the digital world consumes, and what actually happens between typing a URL and seeing a webpage. The energy numbers alone are worth the read.

The Software Stack. What happens between writing code and a computer doing something useful, why some programs finish in milliseconds while others take hours, and how your data gets stored when you hit save.

The Intelligence Layer. How computers learn from data without explicit rules, what a neural network is and how it processes information, what happens inside an LLM when you send it a prompt, and what it takes to train and serve a model to billions of users. This is where things get interesting. My take: once you see how the training pipeline works end to end, the “AI is just statistics” argument falls apart.

Security and the Future. How hackers actually break into systems, how encryption keeps communication private, what happens when AI can act on its own, and, based on everything in the series, where technology is most likely heading next.

WHAT YOU WILL GET (AND WHAT YOU WILL NOT)

This series will not make you an expert in any single topic. It will not teach you to build a neural network from scratch or design a data center cooling system.

What it will do is give you a map: a mental model of how everything connects, from copper wire to artificial intelligence. So when someone says “we need more compute” or “this model has 175 billion parameters,” you know what that actually means. Not just the words, but the physical reality behind them: the electricity, the silicon, the infrastructure, all of it.
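To make the “175 billion parameters” example concrete, here is a back-of-the-envelope sketch of the physical memory those weights occupy. It assumes every parameter is stored at one fixed precision, and it uses a hypothetical 80 GB accelerator as the unit of “compute.” Real deployments mix precisions and also need memory for activations and caches, so treat these numbers as a lower bound, not a sizing guide.

```python
import math

# Bytes needed to store one parameter at common numeric precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}

# Hypothetical accelerator memory size, chosen only for illustration.
ACCELERATOR_MEMORY_GB = 80


def weight_memory_gb(num_params: int, precision: str = "fp16") -> float:
    """Size of the raw weights in gigabytes (1 GB = 10**9 bytes).

    Assumes every parameter uses the same precision and ignores
    activations, caches, and framework overhead.
    """
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def accelerators_needed(num_params: int, precision: str = "fp16",
                        memory_gb: float = ACCELERATOR_MEMORY_GB) -> int:
    """Minimum number of devices whose combined memory can hold the weights."""
    return math.ceil(weight_memory_gb(num_params, precision) / memory_gb)


if __name__ == "__main__":
    params = 175_000_000_000  # the "175 billion parameters" from the text
    for precision in BYTES_PER_PARAM:
        gb = weight_memory_gb(params, precision)
        n = accelerators_needed(params, precision)
        print(f"{precision}: {gb:.0f} GB of weights, "
              f"at least {n} x {ACCELERATOR_MEMORY_GB} GB devices")
```

At 16-bit precision the weights alone come to 350 GB, so under these assumptions they must be split across at least five such devices before a single prompt is processed. That is one concrete way “we need more compute” turns into racks of physical hardware.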

Is that worth 20 articles? I think so. But I am biased.

THE COMPLETE ROADMAP

- ✅ The Tech Roadmap: From Electricity to AI (you are here)

- ✅ How Electricity Actually Works

- ✅ Semiconductors: The Material That Changed Everything

- ✅ Processors and GPUs: How Chips Think

- ✅ Data Centers: Where the Cloud Lives

- ✅ Power and the Internet: The Energy Cost of Everything Digital

- ✅ How the Internet Actually Works

- ✅ Software Fundamentals: What Code Actually Does

- ✅ Algorithms: How Computers Solve Problems

- ✅ Databases and Data: How Systems Store Information

- ✅ Machine Learning: How Computers Learn from Data

- ✅ Neural Networks: How AI Mimics the Brain

- ✅ LLMs: How ChatGPT Actually Works

- ✅ AI Training: How Models Get Smart

- ✅ AI Inference and Scaling: From Training to Serving Billions

- ✅ The Frontier Labs: Who Is Building AGI

- ✅ Hacking: How Cyberattacks Actually Work

- ✅ Encryption and Trust: How Privacy Works Online

- ✅ AI Agents: Software That Acts on Its Own

- ✅ What Comes Next: The Near Future of Technology

T.

References

- How Does the Internet Work? - Stanford - Stanford’s foundational whitepaper on internet infrastructure, referenced in the networking articles of this series

- Attention Is All You Need - Vaswani et al. - The 2017 paper that introduced the Transformer architecture, the foundation for every LLM discussed in this series

- AI Index Report 2025 - Stanford HAI - Annual benchmark data on AI capabilities, costs, and adoption that informed the training and inference articles