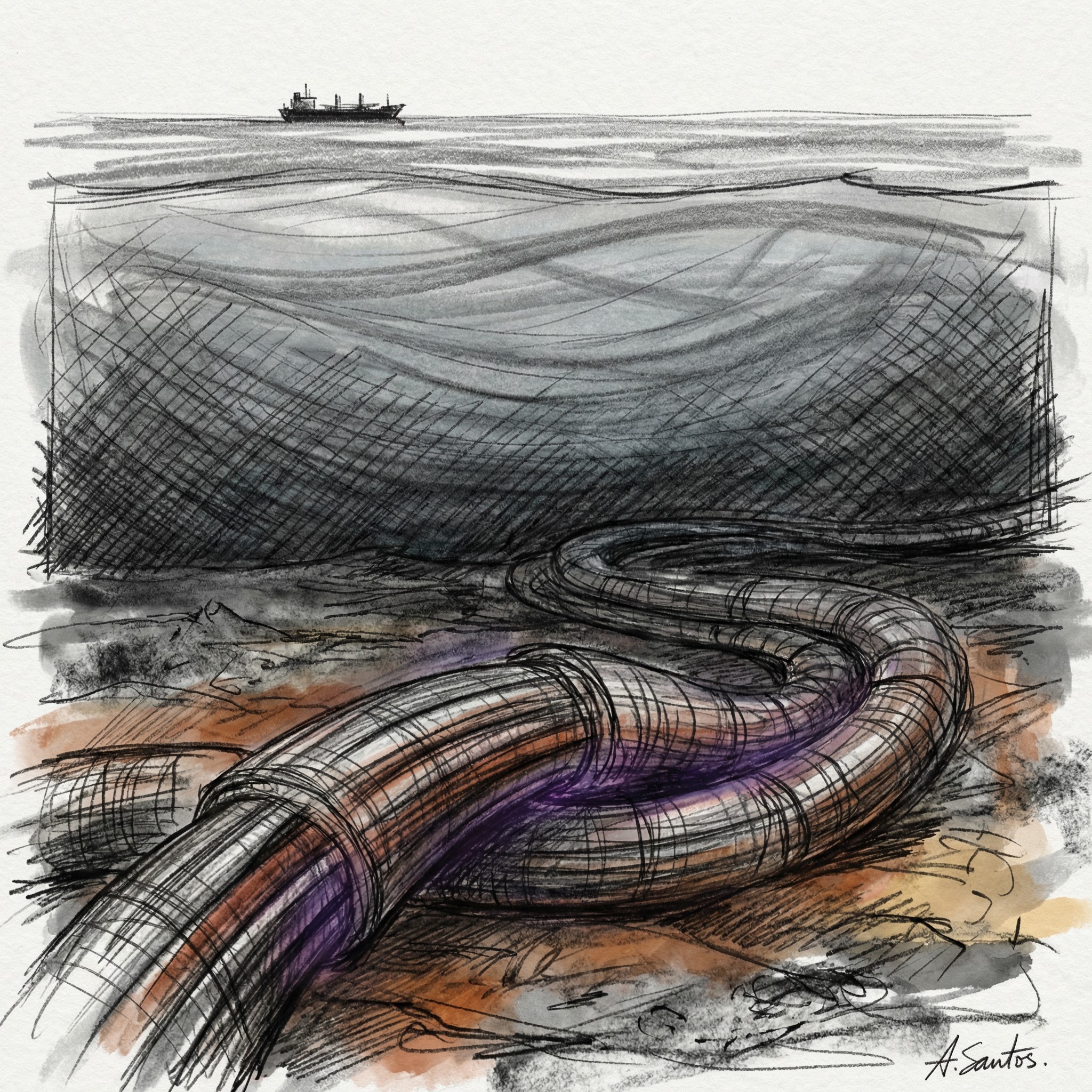

There are roughly 1.48 million kilometers of fiber optic cable on the ocean floor. That is enough to circle the Earth thirty-seven times. Every time you load a webpage, send an email, or run an AI query, your data almost certainly travels through some portion of that cable.

Not satellites. Not radio waves. Actual glass fibers, thinner than a human hair, resting on the seafloor in darkness.

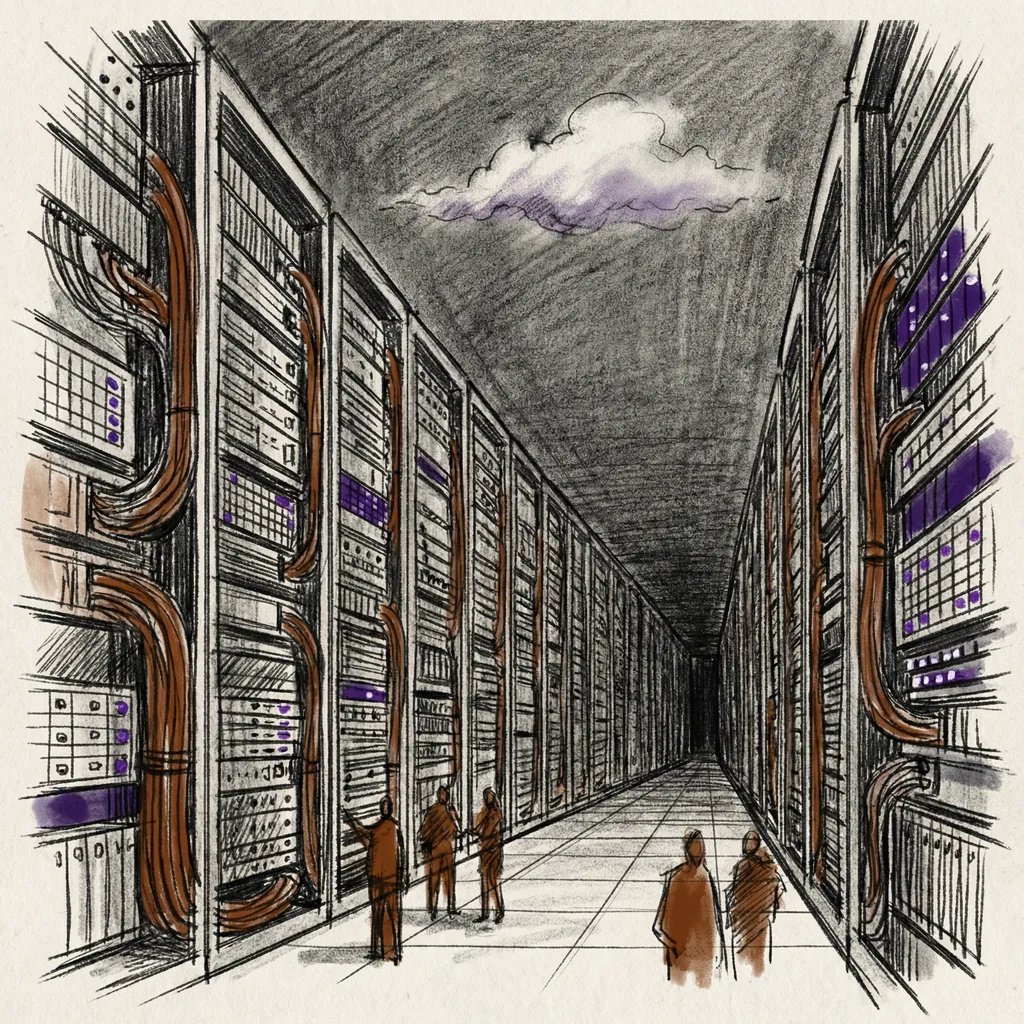

Most people picture the internet as something wireless and cloud-like. The reality is the opposite: an intensely physical infrastructure of cables, hardware, and buildings, connected by protocols that make it all look seamless. In the previous articles in this series, we traced electricity through silicon into processors, then into data centers. Now we zoom out to the network layer connecting those data centers to each other and to every device on Earth.

The Physical Internet

On land, backbone traffic travels through fiber optic cables strung along highways, buried underground, and threaded through city conduit. A single fiber strand carries data as pulses of light at roughly 200,000 kilometers per second. Modern cable bundles contain hundreds of individual strands, each carrying multiple data channels simultaneously through wavelength-division multiplexing.
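The 200,000 kilometers per second figure follows from how much glass slows light down. A minimal sketch, assuming a refractive index of about 1.47 for silica fiber (an illustrative value that does not come from this article; real fibers vary slightly):

```python
# Speed of light in a vacuum, in kilometers per second.
C_VACUUM_KM_S = 299_792.458

# Assumed refractive index for silica fiber (illustrative, not from the article).
REFRACTIVE_INDEX = 1.47


def speed_in_fiber_km_s(n: float = REFRACTIVE_INDEX) -> float:
    """Light travels at c / n inside a medium with refractive index n."""
    return C_VACUUM_KM_S / n


fiber_speed = speed_in_fiber_km_s()
print(f"{fiber_speed:,.0f} km/s in fiber")
print(f"{fiber_speed / C_VACUUM_KM_S:.0%} of the vacuum speed")
```

Under that assumption the result lands a little above 200,000 km/s, which is why the round number works as a rule of thumb.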

Intercontinental traffic crosses the ocean. Over 600 submarine cable systems span the seafloor. The cables are armored with steel wire near coastlines, where anchors pose a hazard, and thinner in deep water, where undersea earthquakes are the main threat. Google’s Grace Hopper cable, connecting the US to the UK and Spain, is designed to carry roughly 350 terabits per second across 16 fiber pairs. One terabit per second is enough to stream roughly 250,000 HD video feeds simultaneously, at about 4 megabits per second each.
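The capacity figures above are easy to sanity-check. This sketch takes the article's numbers (350 terabits per second, 16 fiber pairs, 250,000 feeds per terabit) and assumes an HD stream of 4 megabits per second, which is the bitrate those numbers imply rather than a measured value:

```python
TBPS = 1_000_000_000_000      # bits per second in one terabit per second
HD_STREAM_BPS = 4_000_000     # assumed HD bitrate: 4 megabits per second


def streams_supported(link_bps: int, stream_bps: int) -> int:
    """How many constant-bitrate streams fit in a link, ignoring overhead."""
    return link_bps // stream_bps


feeds_per_terabit = streams_supported(TBPS, HD_STREAM_BPS)
per_pair_tbps = 350 / 16      # cable capacity divided across its fiber pairs

print(f"{feeds_per_terabit:,} HD feeds per terabit per second")
print(f"{per_pair_tbps:.1f} Tbps per fiber pair")
```

The per-pair figure is an even split for illustration; it says nothing about how the operator actually provisions each pair.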

Where do the networks that use these cables connect to each other? At Internet Exchange Points, or IXPs: physical buildings where different networks meet and hand traffic to each other. Instead of routing a packet from a European ISP through an American intermediary to reach a European content provider, both networks can meet at a Frankfurt IXP and exchange traffic directly. DE-CIX in Frankfurt peaked at 17 terabits per second in April 2024. AMS-IX in Amsterdam reached 14 terabits per second later that year. These are measured peak traffic figures, not theoretical capacity.

How Packets Find Their Way

Every device on the internet needs an address. IPv4 uses 32-bit addresses, which provides about 4.3 billion of them, and the central pool of unallocated IPv4 blocks ran out in 2011. IPv6 uses 128-bit addresses providing 340 undecillion unique addresses, enough for every atom on Earth’s surface to have its own.
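Both address counts are just powers of two. A short sketch using Python's standard `ipaddress` module; the two sample addresses come from ranges reserved for documentation and are placeholders, not real hosts:

```python
import ipaddress

ipv4_total = 2 ** 32     # 32-bit addresses
ipv6_total = 2 ** 128    # 128-bit addresses

print(f"IPv4: {ipv4_total:,} addresses")
print(f"IPv6: {ipv6_total:.2e} addresses")

# The standard library parses both families and reports their bit widths.
v4 = ipaddress.ip_address("192.0.2.1")      # documentation-only IPv4 address
v6 = ipaddress.ip_address("2001:db8::1")    # documentation-only IPv6 address
print(v4.version, v4.max_prefixlen)
print(v6.version, v6.max_prefixlen)

# The whole address space of each family, expressed as a single network.
whole_v4 = ipaddress.ip_network("0.0.0.0/0").num_addresses
```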

Data does not travel as a continuous stream. The network breaks it into packets, typically around 1,500 bytes each, labeled with source and destination addresses. Packets travel independently, potentially along different routes, and the receiving end reassembles them in order.
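The split-and-reassemble idea can be sketched in a few lines. This is a toy model, not how any real stack is written: the `Packet` class, its `seq` field, and the addresses are hypothetical, and in practice the ordering information lives in the transport layer rather than in the IP packet itself.

```python
import random
from dataclasses import dataclass

PACKET_SIZE = 1500  # bytes per packet, the typical size mentioned above


@dataclass
class Packet:
    src: str        # source address
    dst: str        # destination address
    seq: int        # position of this chunk in the original data
    payload: bytes


def packetize(data: bytes, src: str, dst: str, size: int = PACKET_SIZE) -> list:
    """Cut data into fixed-size chunks and label each one."""
    return [
        Packet(src, dst, index, data[offset:offset + size])
        for index, offset in enumerate(range(0, len(data), size))
    ]


def reassemble(packets: list) -> bytes:
    """Put chunks back in order, whatever order they arrived in."""
    return b"".join(p.payload for p in sorted(packets, key=lambda p: p.seq))


message = bytes(range(256)) * 20                       # 5,120 bytes of sample data
packets = packetize(message, "192.0.2.10", "198.51.100.20")
random.shuffle(packets)                                # simulate different routes
restored = reassemble(packets)
print(len(packets), "packets, intact:", restored == message)
```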

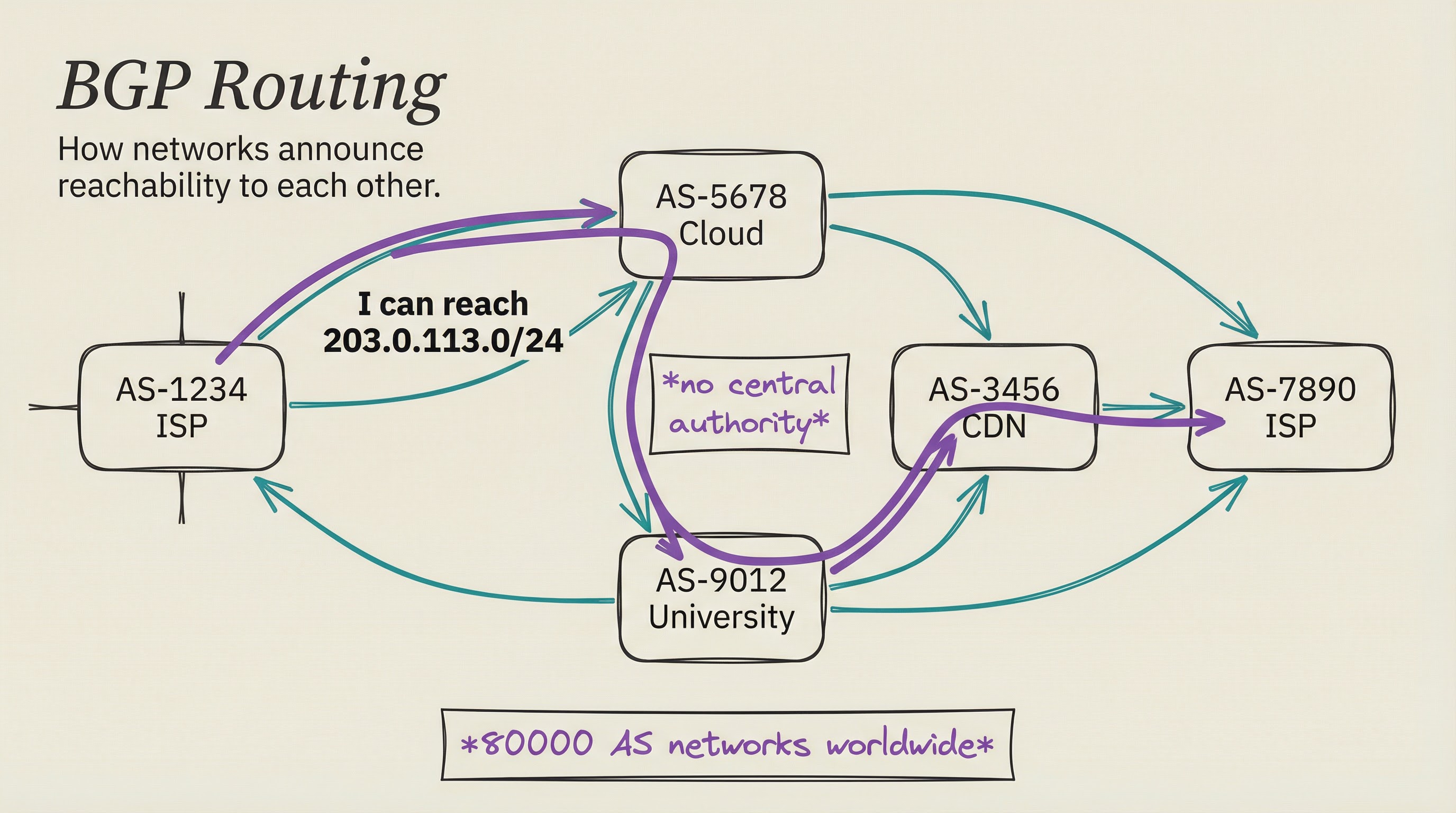

The internet has no central routing authority. Roughly 80,000 Autonomous Systems, the networks operated by ISPs, cloud providers, universities, and corporations, announce reachability to each other through Border Gateway Protocol. Each system tells its neighbors which address blocks it can reach. Neighbors propagate that information onward, producing a globally distributed routing table.
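To make the "tell your neighbors, who tell their neighbors" idea concrete, here is a toy path-vector simulation. The topology and AS numbers are hypothetical (the numbers come from the range reserved for documentation), and the only selection rule is "shortest AS path wins", whereas real BGP applies operator policy first.

```python
from collections import deque

# Hypothetical AS-level topology: each AS lists the neighbors it peers with.
NEIGHBORS = {
    64500: [64501, 64502],
    64501: [64500, 64503],
    64502: [64500, 64503],
    64503: [64501, 64502, 64504],
    64504: [64503],
}


def propagate(origin: int) -> dict:
    """Spread one announcement from origin; return each AS's chosen AS path."""
    routes = {origin: []}          # the origin reaches its own prefix directly
    queue = deque([origin])
    while queue:
        asn = queue.popleft()
        advertised = [asn] + routes[asn]   # sender prepends its own number
        for neighbor in NEIGHBORS[asn]:
            if neighbor in advertised:
                continue                   # loop prevention: own ASN in the path
            if neighbor not in routes or len(advertised) < len(routes[neighbor]):
                routes[neighbor] = advertised
                queue.append(neighbor)     # neighbor re-advertises its new route
    return routes


routes = propagate(64504)
for asn, path in sorted(routes.items()):
    print(asn, "reaches the prefix via", path or "itself")
```

No AS ever sees the whole map. Each one only learns paths from its direct neighbors, which is the sense in which the routing table is globally distributed.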

BGP was designed for trust, not adversarial conditions, and the internet periodically pays the price. In January 2024, an attacker hijacked Orange Spain’s routing records and took their network offline for hours. In late June 2024, a BGP incident disrupted 300 networks across 70 countries. The resilience story is real, though: when a submarine cable breaks, BGP discovers alternate paths and traffic shifts within minutes.
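The failover behavior can be illustrated with a deliberately simplified model. BGP does not run a breadth-first search; it is a policy-driven path-vector protocol. The sketch below only shows the underlying idea that a surviving longer path takes over when the direct link disappears. The four sites and their links are hypothetical.

```python
from collections import deque


def shortest_path(links, src, dst):
    """Breadth-first search for the fewest-hop path over undirected links."""
    adjacency = {}
    for a, b in links:
        adjacency.setdefault(a, []).append(b)
        adjacency.setdefault(b, []).append(a)
    previous = {src: None}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            path = []
            while node is not None:        # walk back to the source
                path.append(node)
                node = previous[node]
            return path[::-1]
        for nxt in adjacency.get(node, []):
            if nxt not in previous:
                previous[nxt] = node
                queue.append(nxt)
    return None                            # destination unreachable


# Hypothetical topology: A and B share a direct submarine cable,
# plus a longer route through C and D.
links = [("A", "B"), ("A", "C"), ("C", "D"), ("D", "B")]
before = shortest_path(links, "A", "B")

# Simulate the cable break by dropping the direct link.
surviving = [link for link in links if link != ("A", "B")]
after = shortest_path(surviving, "A", "B")

print("before the break:", before)
print("after the break: ", after)
```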

The Protocol Stack

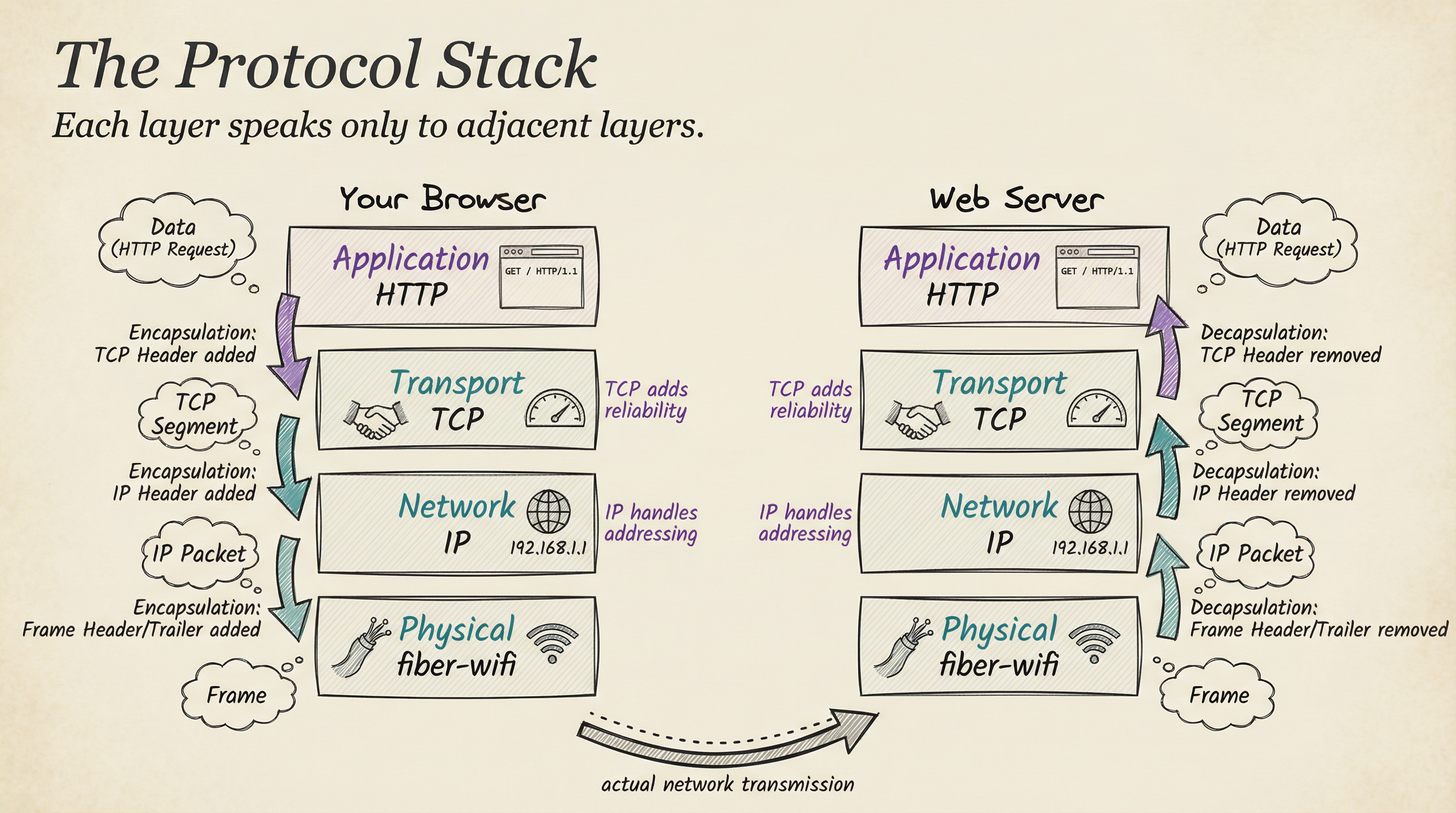

IP handles addressing and routing. TCP, the Transmission Control Protocol, handles reliability. The network is inherently lossy: routers drop packets, transit can corrupt them, cables fail. TCP imposes order on this chaos.

TCP establishes connections through a three-way handshake. Your device sends a SYN packet: “I want to connect.” The server responds with SYN-ACK: “Acknowledged.” Your device confirms with ACK: “Connection established.” From New York to Virginia, that exchange takes roughly 15 milliseconds before a single byte of application data flows.
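You rarely see the handshake because the operating system performs it inside `connect()`. A minimal sketch that times it on the loopback interface, so it needs no network access; the measured time will be far below the 15 milliseconds quoted above because no real distance is involved:

```python
import socket
import time

# A listening socket on loopback; port 0 lets the OS pick a free port.
server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
server.bind(("127.0.0.1", 0))
server.listen(1)
host, port = server.getsockname()

start = time.perf_counter()
# The call returns once the kernel has exchanged SYN, SYN-ACK, and ACK.
client = socket.create_connection((host, port), timeout=2)
handshake_ms = (time.perf_counter() - start) * 1000

# accept() hands back the connection the kernel already established.
conn, peer = server.accept()
print(f"handshake completed in {handshake_ms:.3f} ms on loopback")

client.close()
conn.close()
server.close()
```

Pointing the same code at a remote host would add the network round trip to that measurement, which is where the New York to Virginia figure comes from.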

The receiver acknowledges the data it receives. If an acknowledgment does not arrive within a timeout window, TCP retransmits. If packets arrive out of sequence, TCP buffers and reorders them. The application layer sees a clean, reliable byte stream regardless of what the network actually delivered.
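The buffering and retransmission logic can be sketched with a toy receiver. This is a simplification under stated assumptions: real TCP numbers bytes rather than whole segments and runs timers on the sender, and the `Receiver` class here is hypothetical.

```python
class Receiver:
    """Toy in-order delivery: hold early segments, acknowledge cumulatively."""

    def __init__(self):
        self.next_seq = 0      # next segment number the application needs
        self.buffer = {}       # segments that arrived ahead of a gap
        self.delivered = []    # what the application actually sees

    def receive(self, seq: int, data: str) -> int:
        if seq >= self.next_seq:
            self.buffer[seq] = data
        # Release every segment that is now contiguous with what was delivered.
        while self.next_seq in self.buffer:
            self.delivered.append(self.buffer.pop(self.next_seq))
            self.next_seq += 1
        return self.next_seq   # cumulative ACK: "I need this one next"


segments = ["The ", "quick ", "brown ", "fox"]
receiver = Receiver()

# The network delivers segments 0, 2, and 3 but loses segment 1.
for seq in [0, 2, 3]:
    ack = receiver.receive(seq, segments[seq])

# The ACK is stuck at 1, so the sender's timeout fires and it retransmits.
while ack < len(segments):
    ack = receiver.receive(ack, segments[ack])

print("".join(receiver.delivered))
```

Segments 2 and 3 sit in the buffer until the retransmitted segment 1 arrives, at which point all three are released to the application in order.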

UDP, the other major transport protocol, skips all of this. It sends packets and moves on: no acknowledgment, no reordering, no retransmission. Video calls and online gaming use UDP because a dropped packet is better handled by the application than by waiting for a retransmission that arrives too late to be useful.
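For contrast, here is the UDP equivalent on loopback. There is no connection step at all: `sendto()` hands the datagram to the network and returns. The payload and buffer size are arbitrary illustrative values.

```python
import socket

# Receiver: bind to loopback and let the OS choose a free port.
receiver = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
receiver.bind(("127.0.0.1", 0))
receiver.settimeout(1.0)
address = receiver.getsockname()

# Sender: no handshake, no connection state, just send and move on.
sender = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
sent_bytes = sender.sendto(b"frame 42", address)

try:
    data, _ = receiver.recvfrom(2048)
except socket.timeout:
    data = None   # a lost datagram is simply never seen; nothing retransmits it

print("sent", sent_bytes, "bytes, received:", data)

sender.close()
receiver.close()
```

Any recovery from loss is the application's job, which is exactly the tradeoff video calls and games accept.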

The internet’s design is layered: physical cables carry signals, the data link layer (Ethernet, Wi-Fi) handles directly connected devices, IP handles cross-network routing, TCP/UDP handle transport, and application protocols like HTTP and DNS sit on top. Each layer speaks only to its neighbors. This is why you can run HTTP over TCP over IPv4 over Wi-Fi or fiber without changing any other layer.
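Layering works because each layer wraps the one above it without looking inside. A toy sketch of that encapsulation, using bracketed strings as stand-ins for real headers; treating the fiber case as Ethernet framing is an assumption made for illustration:

```python
def encapsulate(payload: str, layers: list) -> str:
    """Wrap the payload in one pseudo-header per layer, innermost first."""
    for layer in layers:
        payload = f"[{layer}|{payload}]"
    return payload


request = "GET / HTTP/1.1"

# Same application data, same transport and network layers, different link.
over_wifi = encapsulate(request, ["TCP", "IPv4", "Wi-Fi"])
over_fiber = encapsulate(request, ["TCP", "IPv4", "Ethernet"])

print(over_wifi)
print(over_fiber)
```

Only the outermost wrapper differs between the two results. Everything from IPv4 inward is identical, which is the property the paragraph above describes.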

The Phone Book Problem

The network routes by IP address, but humans use names. DNS, the Domain Name System, translates between them.

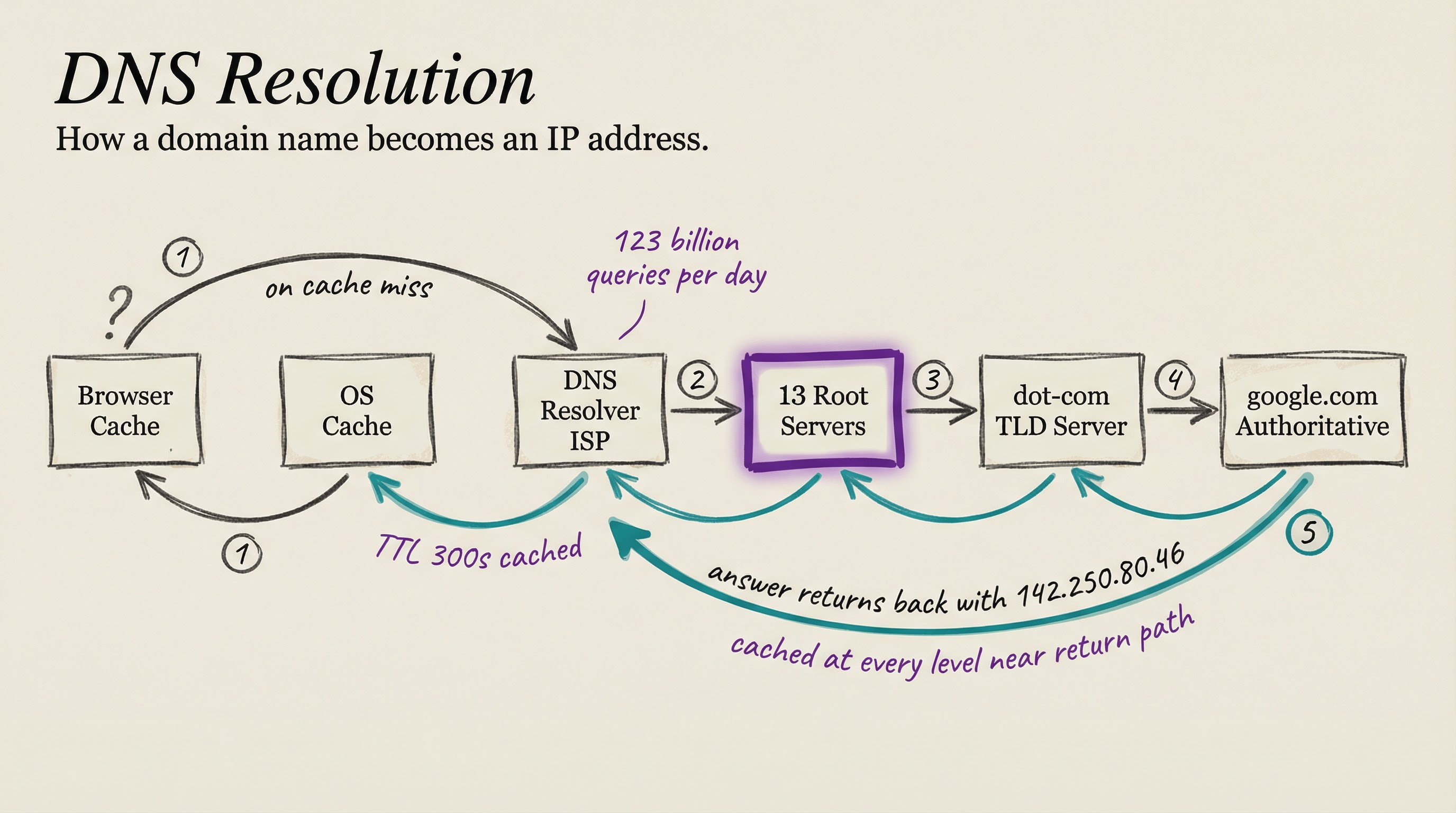

When you type a domain into your browser, the first stop is local: your browser checks its own cache, then your operating system’s cache. If neither has the answer, the query goes to a DNS resolver, typically one operated by your ISP or a public resolver like Cloudflare’s or Google’s. The resolver walks the hierarchy: one of 13 root name server clusters, then the TLD server for .com, then the authoritative server for the specific domain. The actual IP address comes back, cached at every level along the way.
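The lookup sequence can be sketched as a resolver walking three tables with a cache in front. Everything here is hypothetical stand-in data: the server names are invented, and the address comes from a range reserved for documentation. A real resolver sends network queries and honors expiry times on cached answers.

```python
# Hard-coded stand-ins for the three levels of the hierarchy.
ROOT = {"com": "tld-com"}
TLD = {"tld-com": {"example.com": "ns-example"}}
AUTHORITATIVE = {"ns-example": {"www.example.com": "192.0.2.80"}}

cache = {}
trace = []   # records which servers each lookup had to consult


def resolve(name: str) -> str:
    if name in cache:
        trace.append("cache")
        return cache[name]

    labels = name.split(".")
    tld = labels[-1]                      # "com"
    domain = ".".join(labels[-2:])        # "example.com"

    trace.append("root")
    tld_server = ROOT[tld]                # root points to the TLD server
    trace.append("tld")
    auth_server = TLD[tld_server][domain] # TLD points to the authoritative server
    trace.append("authoritative")
    address = AUTHORITATIVE[auth_server][name]

    cache[name] = address                 # later lookups skip the whole walk
    return address


first = resolve("www.example.com")
second = resolve("www.example.com")
print(first, trace)
```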

The Vercara UltraDNS platform alone processes approximately 123 billion authoritative DNS queries per day, and that covers only one major operator. When DNS breaks at any level, entire categories of internet functionality break with it.

The Last Mile

Backbone fiber operates at terabits per second. The connection from that backbone to your home, the “last mile,” is often the bottleneck. Fiber to the home delivers 1 to 10 gigabits symmetrically. Cable internet reaches 1-2 gigabits downstream but much less upstream, a design legacy from when consumers mostly received content. DSL tops out around 100 megabits over copper. Fixed wireless via 5G delivers 100 to 1,000 megabits depending on tower proximity.
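To see what those tiers mean in practice, this sketch computes the time to move one gigabyte at three of the rates mentioned above. It assumes the link runs at its full rated speed and ignores protocol overhead, so real transfers take longer.

```python
GIGABYTE = 1_000_000_000   # bytes


def transfer_seconds(size_bytes: float, rate_bps: float) -> float:
    """Idealized transfer time: total bits divided by bits per second."""
    return size_bytes * 8 / rate_bps


RATES_BPS = {
    "fiber, 10 Gbps": 10e9,
    "fiber or cable, 1 Gbps": 1e9,
    "DSL, 100 Mbps": 100e6,
}

times = {label: transfer_seconds(GIGABYTE, rate) for label, rate in RATES_BPS.items()}
for label, seconds in times.items():
    print(f"{label}: {seconds:.1f} s per gigabyte")
```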

The cable asymmetry has become more visible as video calls, remote work, and content creation have grown. Fiber eliminates it entirely.

When You Load a Page

You type a URL and press Enter. First, DNS resolution: cache checks, then a resolver walks the hierarchy. Total time on a cache miss: 10 to 50 milliseconds. Second, TCP and TLS setup: three-way handshake plus certificate exchange. For a server with a 30-millisecond round-trip time, setup takes 60 to 90 milliseconds, depending on how many round trips the TLS version needs. Third, the HTTP request and response: single-digit milliseconds for static content, more for dynamic. Fourth, resource loading: a typical page triggers 50 to 200 sub-requests for CSS, JavaScript, images, and fonts. Fifth, rendering: the browser assembles everything into pixels on your screen.

Total time from Enter to usable page: 500 to 2,000 milliseconds.
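The setup figure in the second step can be reproduced from round trips alone. This sketch assumes a 30-millisecond round-trip time, one round trip for the TCP handshake, and one or two more for a full TLS handshake (TLS 1.3 and TLS 1.2 respectively). It then adds the article's DNS range to estimate the delay before the HTTP request can even be sent.

```python
RTT_MS = 30                 # assumed round-trip time to the server
DNS_MISS_MS = (10, 50)      # DNS range on a cache miss, from the text above


def connection_setup_ms(rtt_ms: float, tls_round_trips: int) -> float:
    """One round trip for the TCP handshake plus the TLS round trips."""
    return rtt_ms * (1 + tls_round_trips)


fast_setup = connection_setup_ms(RTT_MS, tls_round_trips=1)   # TLS 1.3
slow_setup = connection_setup_ms(RTT_MS, tls_round_trips=2)   # TLS 1.2

before_request_ms = (DNS_MISS_MS[0] + fast_setup, DNS_MISS_MS[1] + slow_setup)

print(f"setup: {fast_setup:.0f} to {slow_setup:.0f} ms")
print(f"before the first HTTP request: {before_request_ms[0]:.0f} to {before_request_ms[1]:.0f} ms")
```

The rest of the 500 to 2,000 millisecond total comes from the sub-requests and rendering, which depend on the page and are not modeled here.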

One constraint through this entire sequence is absolute: the speed of light. For a user about 6,600 kilometers of fiber from London, roughly a transatlantic route, a server there is at minimum 33 milliseconds away, each direction. No software optimization can reduce that. It is why CDNs distribute content to dozens of cities, and why latency is a first-class engineering concern.
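The latency floor is a one-line calculation once you know the fiber distance. This sketch uses the 200,000 kilometers per second figure from earlier in the article; the 6,600 kilometer distance is simply what a 33-millisecond one-way delay implies at that speed, not a measured cable length.

```python
FIBER_KM_PER_S = 200_000   # approximate speed of light in fiber


def one_way_ms(distance_km: float) -> float:
    """Minimum one-way propagation delay over a given length of fiber."""
    return distance_km / FIBER_KM_PER_S * 1000


def implied_distance_km(delay_ms: float) -> float:
    """Fiber length that corresponds to a given one-way delay."""
    return delay_ms / 1000 * FIBER_KM_PER_S


distance = implied_distance_km(33)
round_trip = 2 * one_way_ms(distance)

print(f"33 ms one way implies about {distance:,.0f} km of fiber")
print(f"minimum round trip: {round_trip:.0f} ms")
```

Routers, queuing, and indirect cable routes only add to this number, which is why moving content closer is the one reliable way to cut it.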

As we move up the stack from hardware and networks toward software and AI, this physical grounding remains relevant. When an AI model responds to your query, the latency you experience is the sum of network round-trip time plus the time GPUs take to generate the response. The network floor is set by physics. The inference floor is set by the scale of the model and the hardware running it, topics this series will reach in the articles ahead.

The internet is not magic. It is light through glass, protocols built on decades of cooperative engineering, and a physical infrastructure that has been quietly accumulating on ocean floors and along highways for forty years. Understanding its mechanics explains the constraints you run into every day and the tradeoffs engineers make when building anything at scale.

T.

References

- TeleGeography Submarine Cable Map - The authoritative interactive map of all active and planned submarine cable systems, maintained by TeleGeography. Used for submarine cable counts, capacity figures, and geographic routing context.

- A Brief History of the Internet’s Biggest BGP Incidents (NANOG) - Survey of major BGP routing incidents from the Network Operators Group, covering hijacks, route leaks, and misconfigurations that caused widespread outages.

- Vercara DNS Analysis Report (2024) - Monthly DNS traffic analysis from one of the largest authoritative DNS operators, providing the per-day query volume statistics cited here.

- DE-CIX Traffic Statistics - Real-time and historical traffic data from the Frankfurt Internet Exchange, the world’s largest single-site IXP by peak throughput.

- Orange Spain BGP Hijack Analysis (Security Boulevard, January 2024) - Technical postmortem on the January 2024 BGP hijack that took Orange Spain’s network offline.