Every time you watch a video, send an email, or ask an LLM a question, you flip a switch in a data center somewhere. That switch costs energy. A lot of it.

The internet feels weightless. You tap your phone and information appears. But behind that tap sits a physical infrastructure consuming more electricity than entire countries.

THE SCALE

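Data centers, including those running AI and cryptocurrency workloads, consumed an estimated 460 terawatt-hours (TWh) of electricity globally in 2022. To put that in perspective, one terawatt-hour is roughly the annual electricity use of 90,000 American homes.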
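The homes comparison is simple division, and it hinges entirely on the assumed household consumption. Here is a minimal Python sketch, assuming an average U.S. home uses about 10,500 kWh of electricity a year (a round ballpark for illustration, not an official figure):

```python
# Convert annual electricity use (TWh) into "homes powered for a year".
# ASSUMPTION: an average U.S. home uses about 10,500 kWh per year (ballpark only).
KWH_PER_TWH = 1_000_000_000  # 1 TWh = 10^9 kWh


def homes_powered(twh: float, kwh_per_home_per_year: float = 10_500) -> float:
    """Number of homes whose combined annual electricity use equals `twh`."""
    return twh * KWH_PER_TWH / kwh_per_home_per_year


print(f"1 TWh   ~ {homes_powered(1):,.0f} homes")
print(f"460 TWh ~ {homes_powered(460) / 1e6:.1f} million homes")
```

Plugging in a larger per-home figure shrinks the count proportionally, so the assumption matters far more than the arithmetic.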

By 2026, the International Energy Agency projects consumption could exceed 1,000 TWh, more than double the 2022 baseline. That’s roughly equivalent to Japan’s entire electricity consumption.

The numbers get starker when you zoom out. Estimates put the internet’s share of global greenhouse gas emissions at 1.5% to 4%, and that share is climbing. Internet-related emissions were about 3.7% of the global total in 2023 and could hit 5.5% by 2026.

What’s driving this surge? Three workloads: artificial intelligence, cryptocurrency, and streaming. They don’t just use electricity. They devour it.

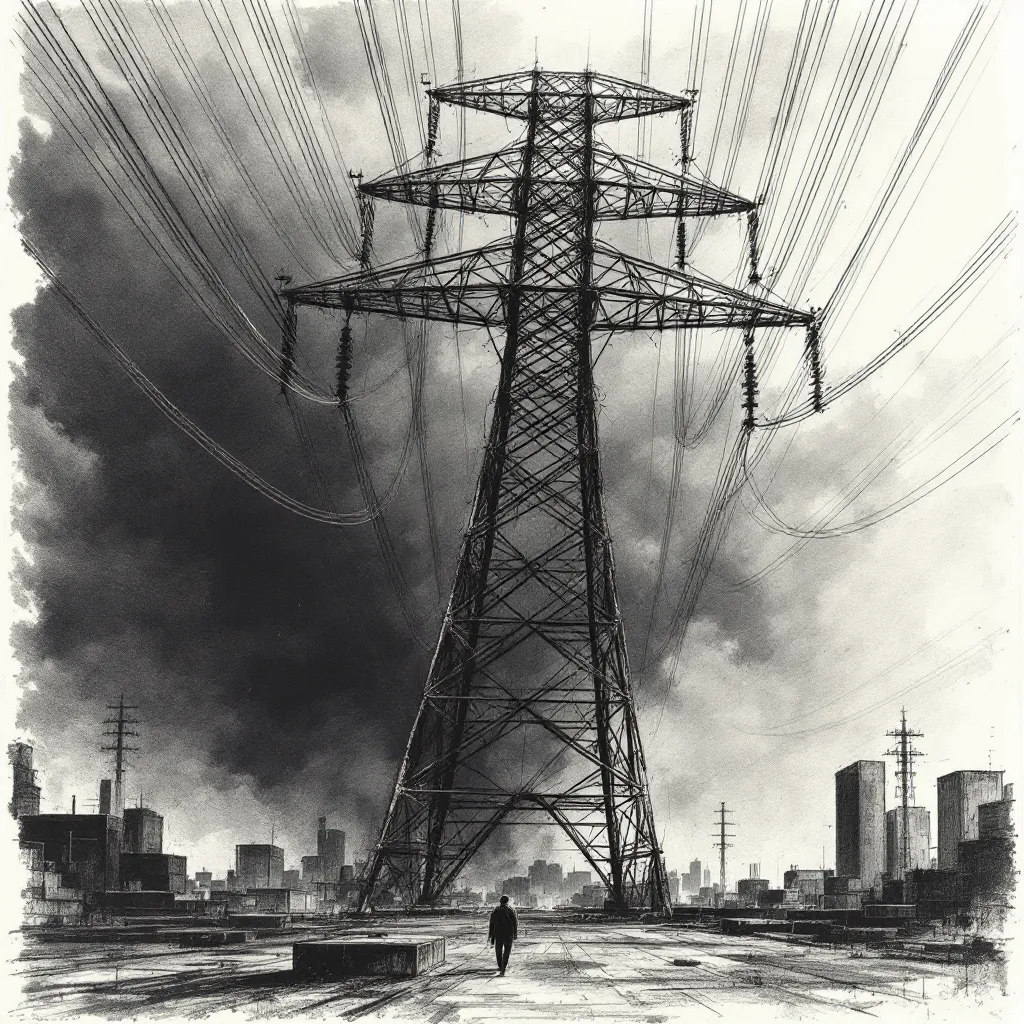

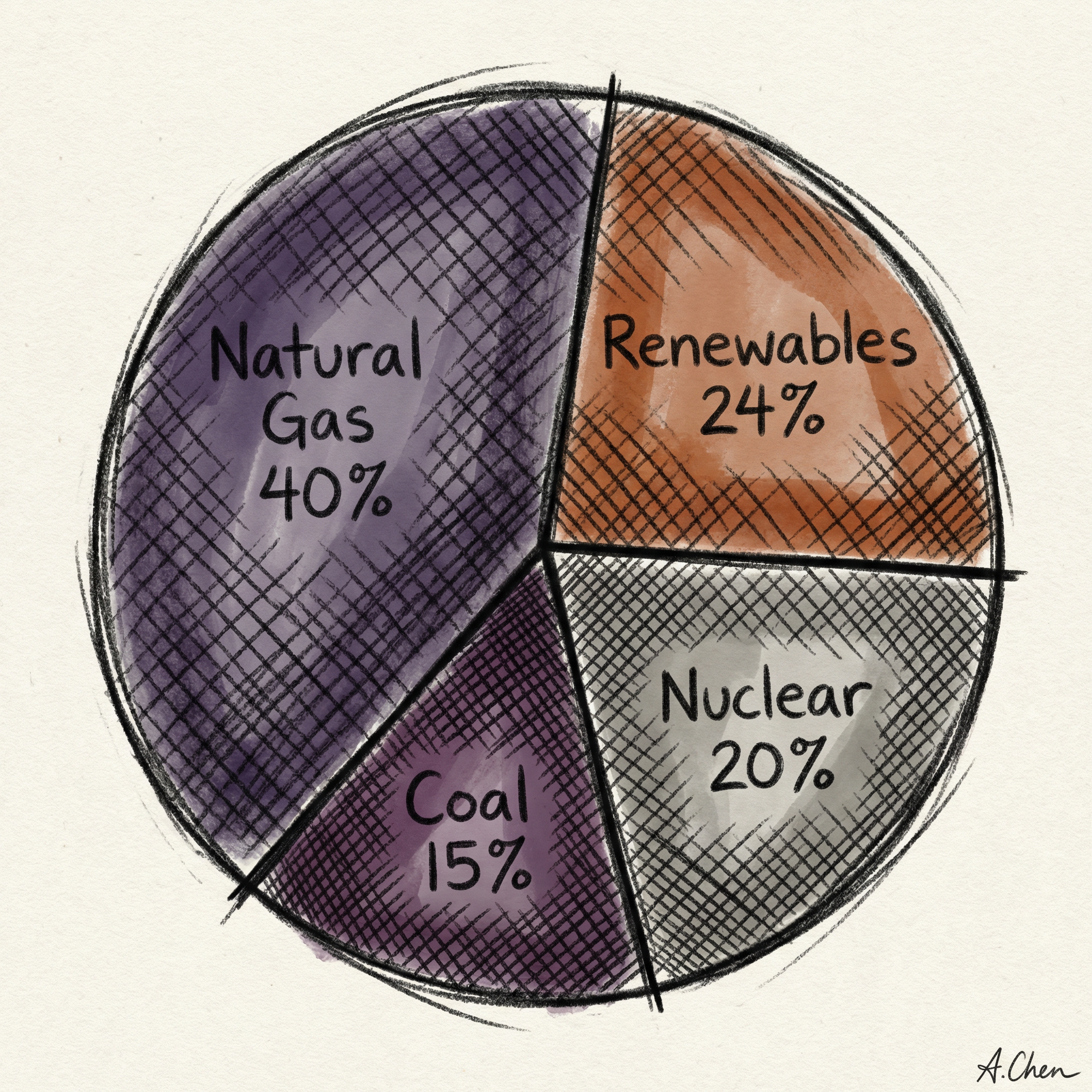

WHAT POWERS THE INTERNET

Walk into a modern data center and you’re walking into an industrial facility. Rows of servers hum 24/7. They’re powered by a mix of energy sources that might surprise you.

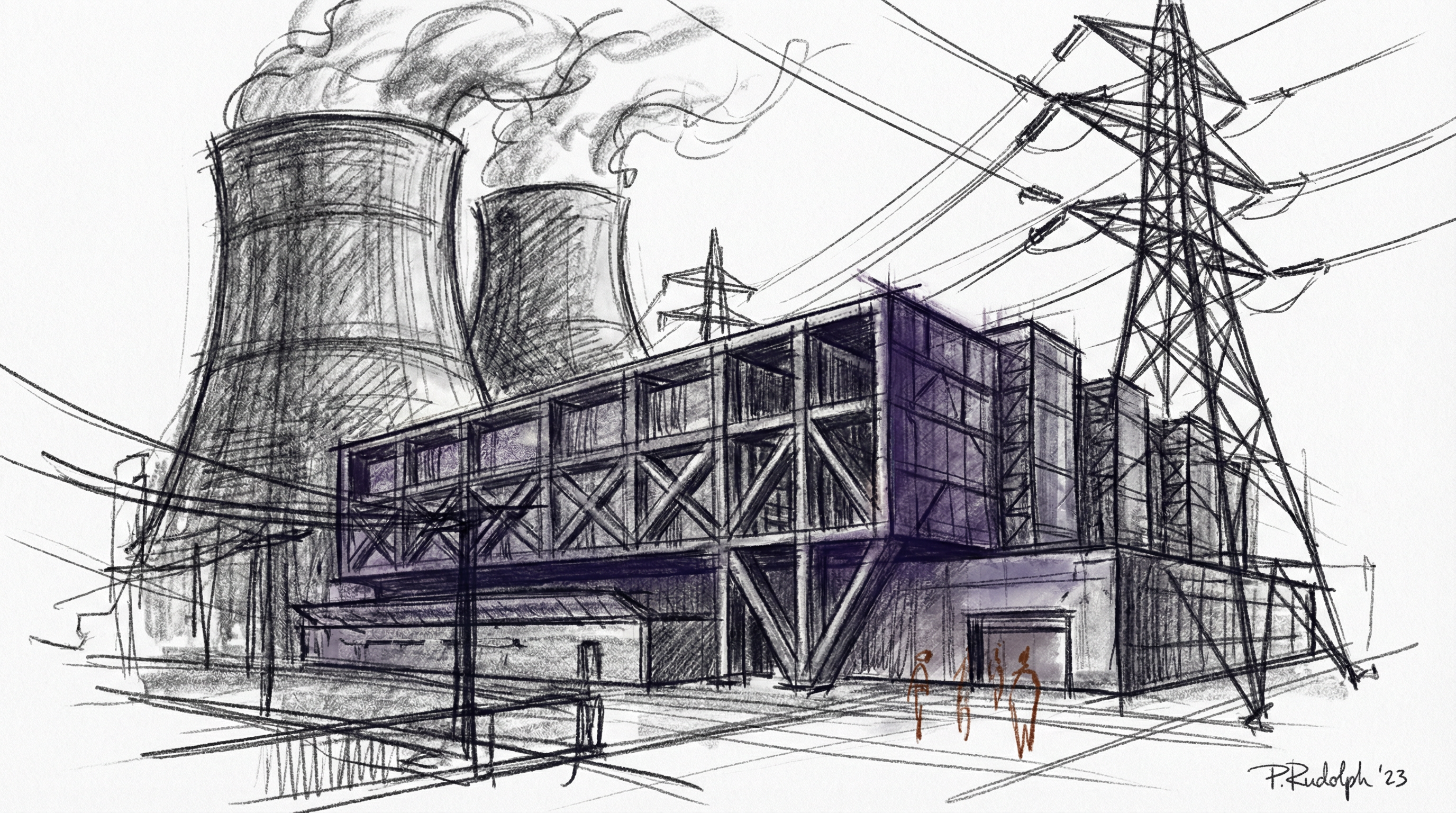

In the United States, natural gas supplies more than 40% of the electricity data centers use. Renewables such as wind and solar deliver 24%, and nuclear contributes about 20%. Coal still produces around 15%.

That energy mix varies wildly by region. In China, coal dominates. In Iceland, geothermal and hydro power most facilities.

Natural gas leads because it’s reliable and relatively cheap. Flip the switch, and the power flows. Solar and wind only generate electricity when conditions are favorable. They need battery storage or backup sources to keep servers running around the clock.

Nuclear offers a different profile. Plants operate 24/7 with a capacity factor exceeding 92.5%. That’s far better than natural gas (56%), wind (35%), or solar (25%). That reliability explains why tech companies are eyeing nuclear.
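Capacity factor is the energy a plant actually produces over a year divided by what it would produce running at full nameplate output every hour. A small sketch using the capacity factors quoted above (the 1 MW unit is just a convenient yardstick):

```python
# Capacity factor = energy actually produced / (nameplate capacity x hours in period).
# Here we run it in reverse: the energy 1 MW of capacity delivers in a 365-day year.
HOURS_PER_YEAR = 8760

CAPACITY_FACTORS = {  # values quoted in the text
    "nuclear": 0.925,
    "natural gas": 0.56,
    "wind": 0.35,
    "solar": 0.25,
}


def annual_mwh_per_mw(capacity_factor: float) -> float:
    """MWh produced per MW of nameplate capacity over one year."""
    return capacity_factor * HOURS_PER_YEAR


for source, cf in CAPACITY_FACTORS.items():
    print(f"{source:<12} {annual_mwh_per_mw(cf):>6,.0f} MWh per MW per year")

# Nameplate solar needed to match the *annual* output of 1 MW of nuclear.
# This ignores timing: the solar energy still arrives only in daylight.
ratio = CAPACITY_FACTORS["nuclear"] / CAPACITY_FACTORS["solar"]
print(f"solar MW per nuclear MW: {ratio:.1f}")
```

By this measure it takes roughly 3.7 MW of solar capacity to match the annual output of 1 MW of nuclear, and even then the solar energy arrives only when the sun is up, which is why storage or backup enters the picture.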

In 2023, Microsoft agreed to buy electricity from fusion startup Helion, which aims to begin delivering power by 2028. The deal was billed as the first power purchase agreement for fusion energy.

THE AI FACTOR

AI changed the equation. Training a single large language model can consume as much electricity as hundreds of American homes use in a year. Training GPT-3 in Microsoft’s data centers evaporated an estimated 700,000 liters of clean freshwater on site.

The scale is staggering. Electricity use by GPU-accelerated AI servers in U.S. data centers grew from less than 2 TWh in 2017 to more than 40 TWh in 2023. Projections suggest 165 to 326 TWh by 2028.
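Those endpoints imply a remarkable compound growth rate. A minimal sketch that backs out the implied annual rate, treating the quoted figures as exact; because they are bounds ("less than 2", "more than 40"), the historical result is a floor rather than a precise value:

```python
def cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate that turns `start` into `end` over `years` years."""
    return (end / start) ** (1 / years) - 1


# Historical: <2 TWh (2017) to >40 TWh (2023). Using the bounds as exact values
# gives a floor on the true rate.
historical = cagr(2, 40, 2023 - 2017)

# Projection: from ~40 TWh (2023) to 165-326 TWh (2028), five years out.
low, high = cagr(40, 165, 5), cagr(40, 326, 5)

print(f"2017-2023: at least {historical:.0%} per year")
print(f"2023-2028: roughly {low:.0%} to {high:.0%} per year")
```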

The IEA estimates that electricity demand from AI-focused servers is growing at 30% a year, compared with 9% for conventional servers.
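Compounding makes the gap between 30% and 9% wider than it looks. A quick sketch of doubling times and five-year growth multiples at those two rates, assuming each rate holds steady (real growth will not be that smooth):

```python
import math


def doubling_time(rate: float) -> float:
    """Years for a quantity growing at `rate` per year (0.30 means 30%) to double."""
    return math.log(2) / math.log(1 + rate)


def growth_multiple(rate: float, years: int) -> float:
    """Factor by which a quantity grows after `years` of compound growth at `rate`."""
    return (1 + rate) ** years


for label, rate in [("AI-focused servers", 0.30), ("conventional servers", 0.09)]:
    print(
        f"{label}: doubles every {doubling_time(rate):.1f} years, "
        f"grows {growth_multiple(rate, 5):.2f}x in 5 years"
    )
```

At 30% a year, demand roughly doubles every 2.6 years; at 9%, it takes about 8 years.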

Training AI models involves thousands of graphics processing units running continuously for months. Nvidia’s H100 GPU is the workhorse. Each chip draws hundreds of watts under load. String together thousands of them and you need your own power plant.
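A back-of-envelope sketch of why large clusters need dedicated power. The 700 W figure is the maximum rated power of Nvidia’s H100 SXM module; the 10,000-GPU cluster size, the 1.5x overhead multiplier, and the 90-day run length are hypothetical inputs chosen for illustration, not figures from any real training run:

```python
def cluster_power_mw(num_gpus: int, watts_per_gpu: float, overhead: float) -> float:
    """Facility power draw in MW for a GPU cluster.

    `overhead` is one hypothetical multiplier standing in for everything besides the
    GPUs: CPUs, memory, networking, cooling, and power-conversion losses.
    """
    return num_gpus * watts_per_gpu * overhead / 1e6


def energy_gwh(power_mw: float, days: float) -> float:
    """Energy in GWh for a constant load of `power_mw` running for `days` days."""
    return power_mw * 24 * days / 1000


# Hypothetical run: 10,000 GPUs at 700 W (H100 SXM maximum rating), 1.5x overhead,
# running at full load for 90 days.
power = cluster_power_mw(10_000, 700, 1.5)
print(f"{power:.1f} MW continuous draw")
print(f"{energy_gwh(power, 90):.1f} GWh over 90 days")
```

Every input scales the result linearly, so doubling the GPU count or the run length doubles the energy.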

Here’s the kicker: 80 to 90% of AI’s computing power now goes to inference, not training. Every time someone asks an AI model a question or generates an image, servers fire up. Billions of queries per day add up fast.
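Inference energy comes down to multiplying two numbers: query volume and energy per query. A sketch with placeholder inputs; both values are hypothetical and are not measurements of any real service:

```python
def annual_inference_gwh(queries_per_day: float, wh_per_query: float) -> float:
    """Annual energy (GWh) for a steady query volume at a fixed energy cost per query."""
    wh_per_year = queries_per_day * wh_per_query * 365
    return wh_per_year / 1e9  # 1 GWh = 10^9 Wh


# Placeholder inputs, NOT measured values: 1 billion queries a day at 0.5 Wh each.
print(f"{annual_inference_gwh(1e9, 0.5):,.1f} GWh per year")
```

Neither input has to be large on its own: a fraction of a watt-hour per query becomes well over a hundred gigawatt-hours a year at a billion queries a day.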

The good news? Efficiency is improving. Nvidia says its GPUs have become 45,000 times more energy efficient at running large language models over the last eight years. Some new techniques can cut training energy by as much as 80%.

But rapidly growing model sizes and computing demand will likely outpace those efficiency gains, resulting in net growth in total energy use.
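The race between efficiency and demand reduces to one multiplication: total energy scales with compute demand times energy per unit of compute. A minimal sketch with hypothetical growth rates (illustrations, not forecasts):

```python
def net_energy_growth(demand_growth: float, efficiency_gain: float) -> float:
    """Annual change in total energy when demand for compute grows by `demand_growth`
    and energy per unit of compute falls by `efficiency_gain` (both as fractions)."""
    return (1 + demand_growth) * (1 - efficiency_gain) - 1


def break_even_efficiency(demand_growth: float) -> float:
    """Yearly efficiency gain needed to keep total energy flat at a given demand growth."""
    return 1 - 1 / (1 + demand_growth)


# Hypothetical rates, not forecasts: compute demand +60%/yr, energy per unit -25%/yr.
print(f"net change in energy: {net_energy_growth(0.60, 0.25):+.0%} per year")
print(f"break-even efficiency gain: {break_even_efficiency(0.60):.1%} per year")
```

In this toy case, efficiency would have to improve 37.5% every year just to hold total energy flat; anything less means net growth.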

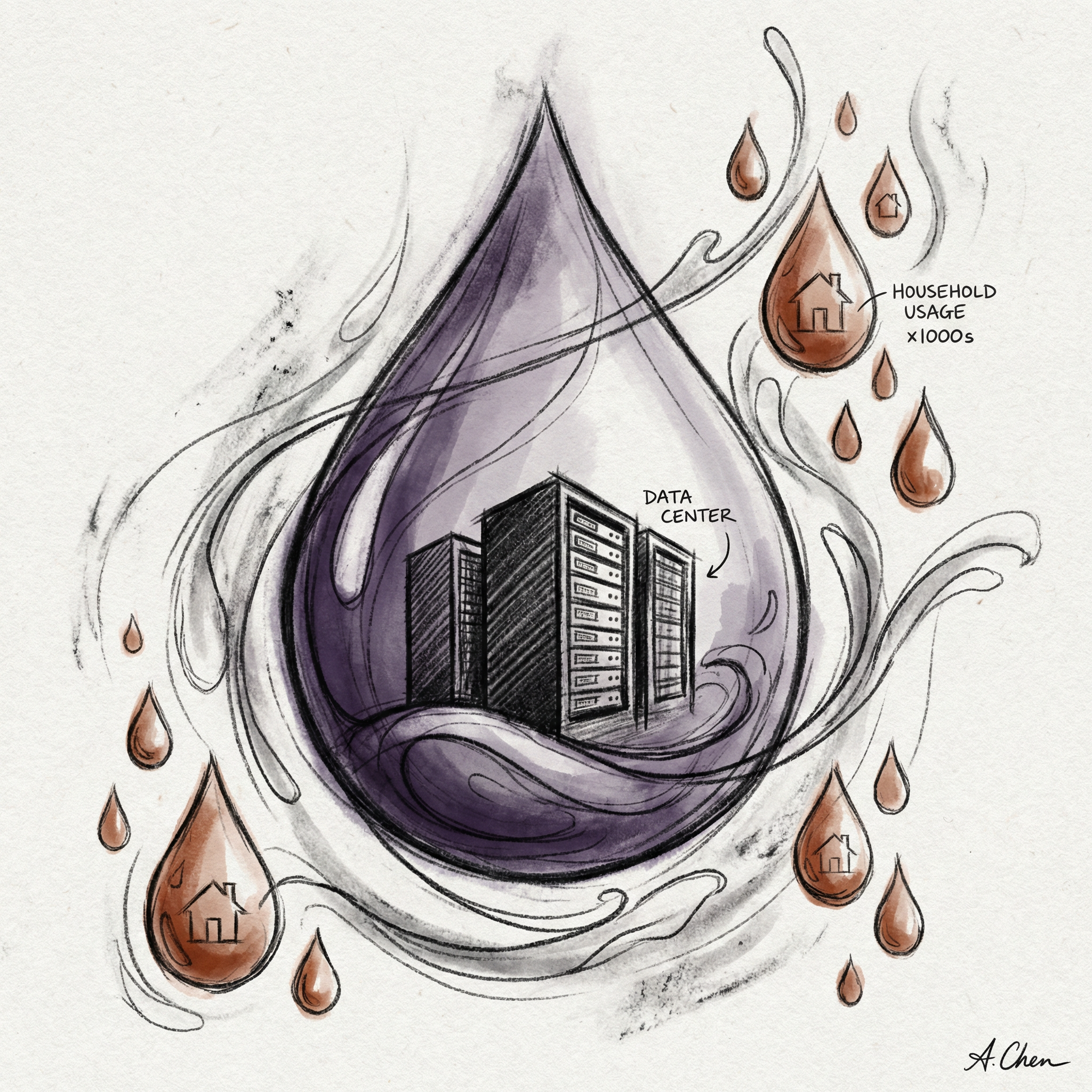

THE WATER PROBLEM

Energy isn’t the only resource data centers consume. They drink water. Lots of it.

A medium-sized data center can consume up to 110 million gallons of water per year, equivalent to 1,000 households. Larger facilities can consume up to 5 million gallons per day. That’s 1.8 billion gallons annually, enough for a town of 10,000 to 50,000 people.
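The water figures above are easy to cross-check. A small sketch converting between daily and annual volumes and into liters (using the U.S. liquid gallon of 3.785411784 L); the per-person lines simply divide the large facility’s daily use by the town sizes quoted in the text:

```python
LITERS_PER_GALLON = 3.785411784  # U.S. liquid gallon
DAYS_PER_YEAR = 365

# Figures quoted in the text.
medium_gal_per_year = 110e6  # medium-sized facility
medium_households = 1_000
large_gal_per_day = 5e6  # large facility

large_gal_per_year = large_gal_per_day * DAYS_PER_YEAR
print(f"medium: {medium_gal_per_year / DAYS_PER_YEAR:,.0f} gal/day, "
      f"{medium_gal_per_year / medium_households:,.0f} gal per household per year")
print(f"large: {large_gal_per_year / 1e9:.3f} billion gal/year "
      f"({large_gal_per_year * LITERS_PER_GALLON / 1e9:.1f} billion liters)")

# Implied use per person per day for the 10,000-50,000 population range in the text.
for people in (10_000, 50_000):
    print(f"{people:,} people: {large_gal_per_day / people:,.0f} gal per person per day")
```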

The water cools servers. U.S. data centers directly consumed 21.2 billion liters in 2014 and 66 billion liters in 2023. Much of that water evaporates in the cooling process. It doesn’t return to rivers and streams.

Here’s the troubling part: roughly two-thirds of the data centers built since 2022 sit in water-stressed regions, including dry states such as Arizona. Building water-hungry cooling infrastructure where water is scarce creates obvious problems.

There’s also indirect water use. Electricity generation accounts for the vast majority of a data center’s water footprint, because power plants, especially coal and nuclear, use massive amounts of water for cooling. In the U.S., data centers have an indirect water footprint of about 800 billion liters a year, roughly twelve times their direct consumption.

New cooling technologies such as direct-to-chip and immersion cooling can reduce both water and energy use. But there’s a tradeoff: optimizing for energy efficiency can worsen water efficiency. Evaporative cooling, for example, uses less energy but loses significant water to evaporation.

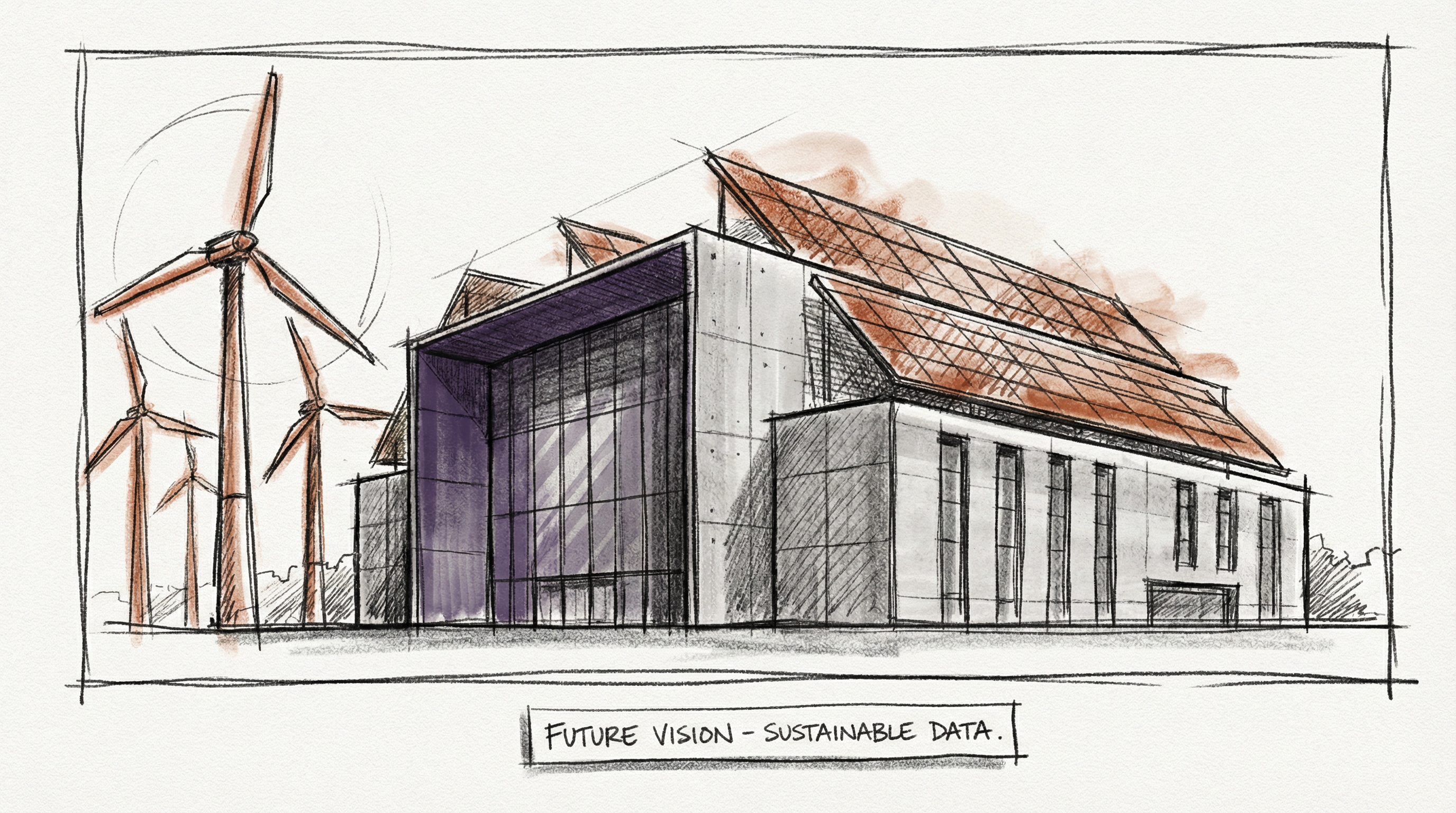

RENEWABLE HOPES

The big tech players know they have a problem. Google, Microsoft, and Amazon have all made aggressive clean-energy pledges, most with deadlines of 2030 or earlier.

Google has matched 100% of its annual electricity use with renewable energy purchases since 2017. Their next commitment goes further. They aim to run on 24/7 carbon-free energy by 2030, matching each hour of electricity consumption with carbon-free energy on every grid where they operate. Google also uses machine learning to optimize cooling, cutting the energy used for cooling by up to 40%.

Microsoft aims to become carbon negative by 2030, meaning they’ll remove more carbon than they emit. By 2050, they plan to remove all carbon emitted since their 1975 founding. That’s an ambitious promise.

Amazon Web Services hit 100% renewable energy in 2023, two years ahead of its 2025 target. But “100% renewable” doesn’t mean what you might think. These companies match their consumption with renewable energy credits and power purchase agreements. They’re not necessarily running servers on wind and solar in real time.

The challenge is timing. Data center demand growth is outpacing renewable energy infrastructure expansion. You can’t build wind farms and solar arrays overnight. Even with aggressive investment, renewables will meet only about half of new demand over the next five years. Natural gas, coal, and nuclear will fill the gap.

THE PATH FORWARD

Can we build a sustainable internet? The honest answer: it depends on the choices we make now. The current emissions trajectory is unsustainable, but there are paths to improvement.

First, efficiency gains matter. Immersion cooling and direct-to-chip liquid cooling can dramatically improve power usage effectiveness (PUE), the ratio of a facility’s total energy use to the energy consumed by its computing equipment. Better GPUs help too. Nvidia’s claimed 45,000-fold efficiency improvement over eight years shows what’s possible.
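Power usage effectiveness (PUE) is a facility’s total energy use divided by the energy consumed by its IT equipment, so a PUE of 1.0 would mean zero overhead. A sketch of what a PUE improvement is worth; the 100 GWh IT load and both PUE values are hypothetical examples, not measurements of any particular cooling technology:

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power usage effectiveness: total facility energy / IT equipment energy.

    1.0 would mean every kilowatt-hour reaches the computing equipment; higher is worse.
    """
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def overhead_kwh(it_equipment_kwh: float, pue_value: float) -> float:
    """Non-IT energy (cooling, power conversion, lighting) implied by a given PUE."""
    return it_equipment_kwh * (pue_value - 1)


# Hypothetical facility with 100 GWh/yr of IT load, compared at two assumed PUE values.
it_load_kwh = 100e6
for label, value in [("PUE 1.50", 1.50), ("PUE 1.15", 1.15)]:
    print(f"{label}: {overhead_kwh(it_load_kwh, value) / 1e6:.0f} GWh/yr of overhead")

# A facility drawing 150 GWh in total for that same IT load:
print(f"PUE = {pue(150e6, it_load_kwh):.2f}")
```

Because the computing load stays the same, every point of PUE shaved off is pure overhead removed.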

Second, energy source matters. Nuclear offers 24/7 baseload power with no carbon emissions during operation. Small modular reactors could sit beside data centers and power them directly, reducing transmission losses. Fusion remains experimental, but Microsoft’s 2028 fusion deal signals serious interest.

Third, we need honesty about tradeoffs. Streaming video, cryptocurrency mining, and AI all have costs. Bitcoin alone consumes an estimated 120 to 150 TWh annually, comparable to Poland’s entire electricity use. By some estimates, a single Bitcoin transaction emits as much CO₂ as 800,000 Visa transactions or 50,000 hours of YouTube. Those numbers should inform how we build digital systems.

The average internet user emits about 229 kilograms of CO₂ per year from digital activity alone. That’s roughly 40% of the per-person carbon budget consistent with limiting warming to 1.5°C. It’s not negligible.

Building a sustainable internet requires acknowledging the physical reality behind the screen. Every click, every search, every AI query pulls electricity from somewhere. The question is whether that electricity comes from coal or sun. Whether it wastes water or conserves it. Whether we design systems for efficiency or convenience.

We can build data centers powered by clean energy. We can develop cooling systems that don’t drain rivers. We can optimize AI models to use less compute. All of this is technically possible.

The barrier isn’t technology. It’s whether we choose to prioritize sustainability over speed. Efficiency over expansion. Long-term thinking over quarterly growth.

The internet has no off switch. It will keep growing. The only question is what powers it.

T.

References

- Electricity 2024 - Data Centres and Data Transmission Networks - IEA’s comprehensive analysis of data center energy consumption trends and projections through 2026

- Land and Water Impacts of Data Centers - Lincoln Institute research on water consumption, freshwater evaporation from AI training, and the siting of data centers in water-stressed regions

- AI Energy Usage and Climate Footprint - MIT Technology Review’s breakdown of GPU-accelerated server growth and the inference vs. training energy split

- How Hyperscalers Are Powering Their Data Centers - Yale Clean Energy Forum analysis of Google, Microsoft, and Amazon renewable energy strategies including fusion investments

- Nuclear Energy for Powering Data Centers - Deloitte’s assessment of nuclear capacity factors and the case for small modular reactors powering compute infrastructure