There is no cloud. There are just buildings full of computers.

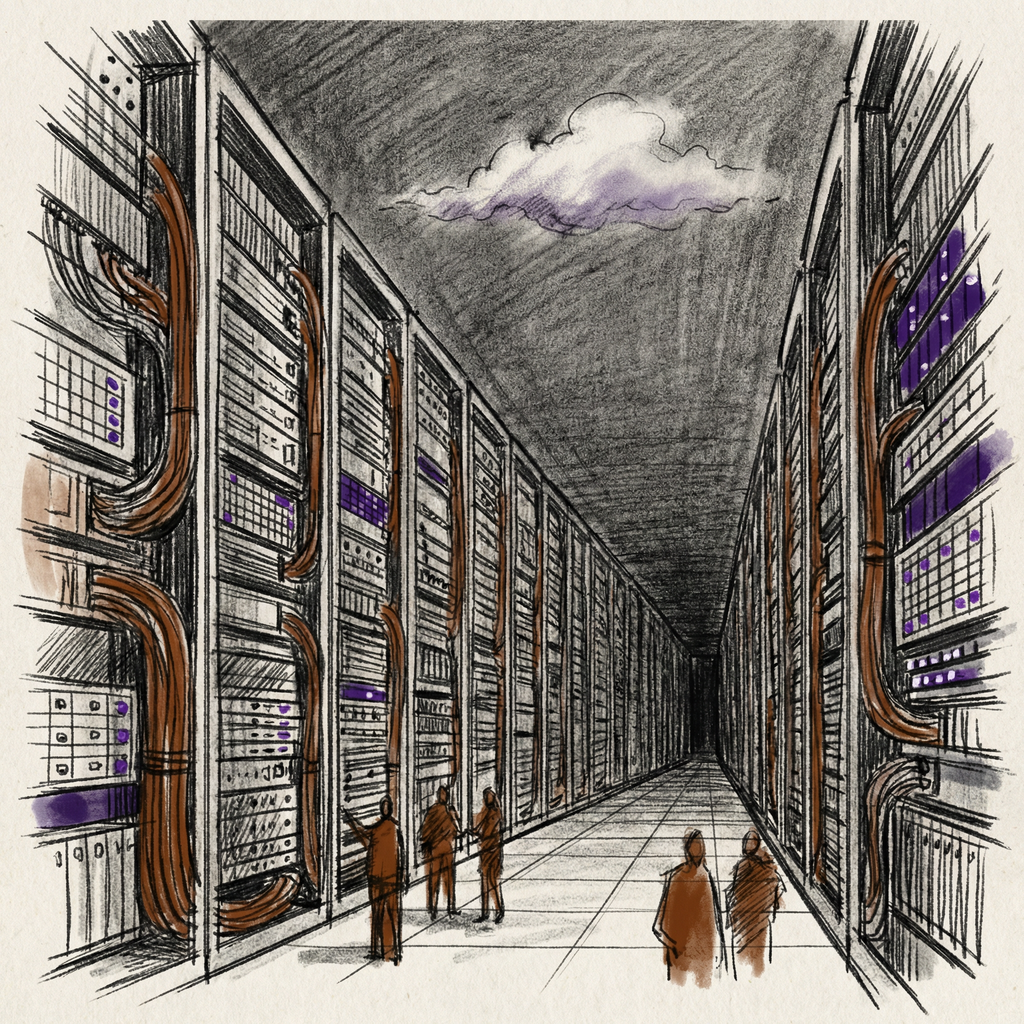

When you send an email, stream a movie, or ask an AI a question, your request travels through fiber optic cables to a specific physical building. Inside that building: rows upon rows of metal racks, each packed with servers, blinking LEDs, and enough cabling to wire a small town. The noise is loud enough to require earplugs. The air is either freezing cold or uncomfortably warm, depending on which aisle you stand in.

This is the cloud. Not a metaphor. Not an abstraction. A warehouse full of computers consuming enough electricity to power a small city.

Article 4 in this series explained how processors think. This article explains where they live. And why the buildings that house them are becoming one of the most important, expensive, and energy-hungry pieces of infrastructure on the planet.

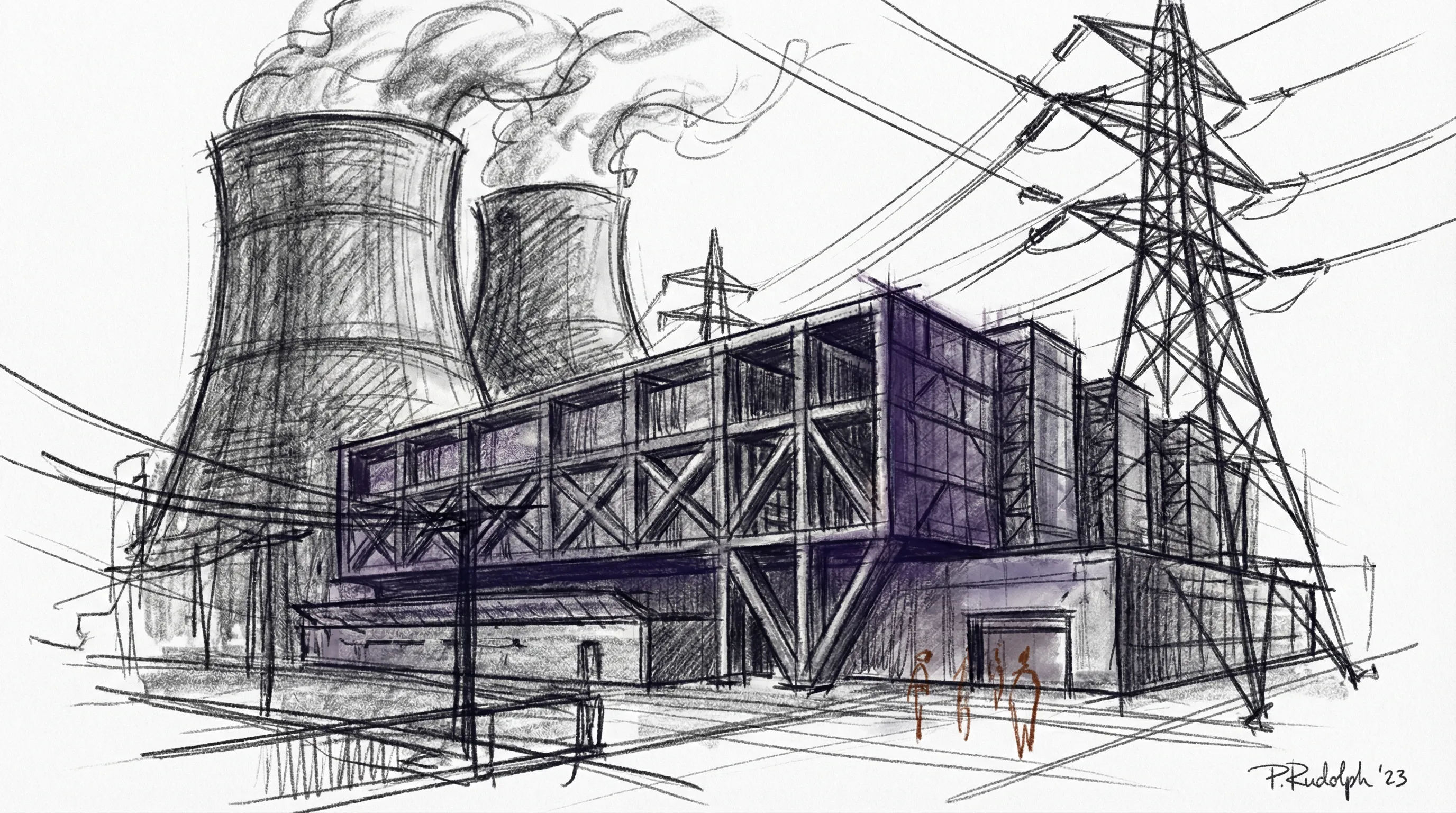

What a Data Center Actually Looks Like

From the outside, a hyperscale data center looks like a massive, windowless warehouse. Think of a building the size of several football fields, surrounded by security fencing, vehicle barriers, and biometric checkpoints. You will not walk in without authorization.

Inside, the first area looks like a regular office. Desks, monitors, people working. But pass through the next security door and the environment changes completely.

The server floor stretches out in long corridors of identical metal cabinets, each about seven feet tall. These are racks, and each one holds 20 to 40 individual servers stacked vertically. Overhead, bundles of cables run in organized trays, often color-coded: blue for network, yellow for fiber, red for power.

Builders raise the floor about two feet off the ground. Underneath runs a network of power cables and cooling infrastructure. Cool air pushes up through perforated floor tiles into “cold aisles,” which the fronts of the servers face.

Servers pull this cool air through their components and exhaust hot air out the back into “hot aisles.” Mixing these airflows wastes energy, so modern facilities use physical barriers to keep hot and cold air separated.

A single hyperscale data center can house 50,000 to 100,000 servers. Amazon operates over 900 data center locations worldwide. Microsoft runs 131 with another 111 under construction. Google has 39 cloud regions with 118 availability zones.

Together, these three companies control about 60% of all hyperscale capacity on Earth.

The Power Problem

Every server needs electricity. Every server generates heat. And the amount of both is growing fast.

A typical Google search uses about 0.3 watt-hours of electricity. A ChatGPT request uses about 2.9 watt-hours.

Nearly ten times more energy for a single AI query.

Nine billion Google searches happen daily. If AI queries reach that volume, the electricity required jumps from roughly 2.7 gigawatt-hours per day to over 26 gigawatt-hours per day, or from about 1 terawatt-hour per year to about 9.5.

More than many countries consume.
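The arithmetic behind that comparison is easy to check. The sketch below uses only the per-query figures and daily volume quoted above; the function name and the 365-day year are illustrative assumptions, and real per-query energy varies by model and hardware.

```python
# Back-of-the-envelope check using the article's figures (not measurements).
SEARCH_WH = 0.3          # watt-hours per conventional search
AI_QUERY_WH = 2.9        # watt-hours per AI request
QUERIES_PER_DAY = 9e9    # nine billion queries a day

def energy_totals(wh_per_query, queries_per_day, days=365):
    """Return (GWh per day, TWh per year) for a given per-query cost."""
    wh_per_day = wh_per_query * queries_per_day
    gwh_per_day = wh_per_day / 1e9
    twh_per_year = wh_per_day * days / 1e12
    return gwh_per_day, twh_per_year

for label, wh in [("search", SEARCH_WH), ("AI query", AI_QUERY_WH)]:
    gwh_day, twh_year = energy_totals(wh, QUERIES_PER_DAY)
    print(f"{label}: {gwh_day:.1f} GWh/day, {twh_year:.2f} TWh/year")
```

Whatever volume you plug in, the ratio between the two rows stays at 2.9 / 0.3, a little under ten.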

Industry analysts project global data center electricity consumption will hit 1,000 terawatt-hours in 2026. That is roughly equal to Japan’s entire annual electricity consumption.

In the United States alone, data centers already consume 6% of all electricity. Goldman Sachs predicts a 165% increase in data center power demand by 2030 compared to 2023 levels.
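A 165% increase over seven years is easier to picture as a yearly rate. This is a minimal sketch, assuming the growth compounds at a constant rate from 2023 to 2030; the function name is illustrative, and the forecast itself does not say growth will be this smooth.

```python
def implied_annual_growth(total_increase_pct, years):
    """Constant yearly growth rate that compounds to the given total increase."""
    growth_factor = 1 + total_increase_pct / 100
    return growth_factor ** (1 / years) - 1

rate = implied_annual_growth(165, 2030 - 2023)
print(f"Implied growth: {rate:.1%} per year")
```

Under that assumption, demand would need to grow by roughly 15% every year for seven years straight.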

This is not hypothetical. In Virginia’s “Data Center Alley,” the concentration of facilities already strains the local power grid. Utilities have delayed new data center projects because the electrical capacity simply does not exist yet.

The reason for this surge has a name: artificial intelligence. AI training and inference workloads grow at 30% per year, three times faster than conventional server demand. A single AI training cluster can consume 10 to 100 megawatts of power. That is enough to power 10,000 to 100,000 homes.

Think of data center power like a hospital’s electrical system. A hospital cannot tolerate even a few seconds without power because lives depend on it. Data centers face the same constraint because digital services depend on it.

So they build in layers of protection: redundant utility feeds from separate substations, massive battery banks that kick in within milliseconds, diesel generators that can run for days.

A Tier IV facility has fully redundant power paths. You can take any single component offline for maintenance and nothing goes down.

Keeping It Cool

Servers generate heat. A lot of heat. Without active cooling, temperatures inside a rack can reach damaging levels within minutes.

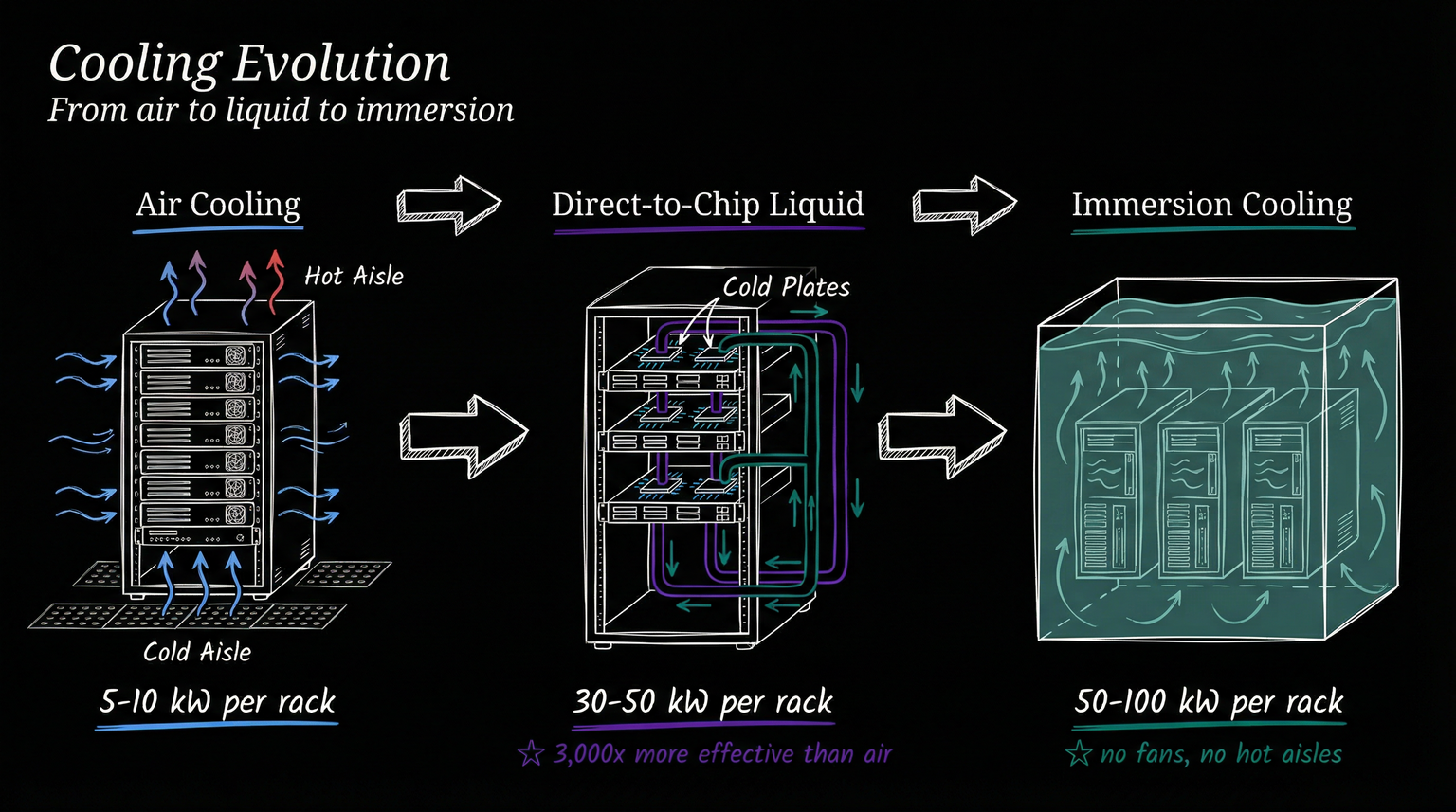

Traditional data centers use a system similar to air conditioning. Computer Room Air Conditioning (CRAC) units push cold air under the raised floor. The air rises through perforated tiles, flows through servers, and gets collected from the hot aisles. Chillers and cooling towers on the roof reject the heat to the outside environment.

This works fine for traditional workloads at 5 to 10 kilowatts per rack. But AI servers packed with GPUs pull 30 to 100 kilowatts per rack. Air cannot remove heat fast enough at those densities.

The industry’s answer: liquid cooling.

Think of it like the difference between blowing on hot soup and dunking it in an ice bath. Air moves heat slowly. Liquid moves it roughly 3,000 times more effectively.
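The gap comes from how much heat a given volume of fluid can carry. The sketch below estimates the flow needed to cool a rack using Q = P / (ρ · c_p · ΔT). The property values are approximate textbook figures for room-temperature air and water, and the 10 K temperature rise is an assumption chosen for illustration; real coolants and facility designs differ.

```python
# Approximate room-temperature properties (textbook values, assumed here).
AIR = {"density": 1.2, "specific_heat": 1005.0}      # kg/m^3, J/(kg*K)
WATER = {"density": 998.0, "specific_heat": 4186.0}  # kg/m^3, J/(kg*K)

def flow_needed(rack_watts, fluid, delta_t_kelvin=10.0):
    """Volumetric flow (m^3/s) needed to carry away rack_watts of heat.

    Uses Q = P / (rho * c_p * dT); dT is an assumed coolant temperature rise.
    """
    heat_per_m3 = fluid["density"] * fluid["specific_heat"] * delta_t_kelvin
    return rack_watts / heat_per_m3

for rack_kw in (10, 100):
    air = flow_needed(rack_kw * 1000, AIR)
    water = flow_needed(rack_kw * 1000, WATER)
    print(f"{rack_kw} kW rack: {air:.2f} m^3/s of air "
          f"or {water * 1000:.2f} L/s of water")

ratio = flow_needed(1.0, AIR) / flow_needed(1.0, WATER)
print(f"Water carries about {ratio:,.0f}x more heat per unit volume")
```

With these assumed values the ratio lands in the low thousands, the same ballpark as the figure above. A 100 kW rack would need several cubic meters of air per second, but only a few liters of water.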

Direct-to-chip cooling attaches metal cold plates directly to processors. Coolant flows through pipes, absorbing heat right at the source before it spreads into the surrounding air. This is the most common approach for new AI installations.

Immersion cooling takes it further. Engineers submerge servers entirely in a bath of non-conductive fluid. The fluid absorbs heat across every component surface simultaneously.

No fans needed. No hot aisles. The servers sit quietly in what looks like a fish tank.

Liquid cooling reduces cooling-related energy consumption by 50 to 60 percent. It also enables much denser server configurations, packing more compute power into less floor space.

The tradeoff: retrofitting existing air-cooled facilities is expensive and complex, and the industry is still standardizing connectors, fluid types, and maintenance procedures.

Water usage is another concern. Large air-cooled data centers can consume millions of gallons of water daily for evaporative cooling. Some regions with water scarcity are now requiring dry or hybrid cooling systems, pushing operators toward solutions that use no water at all.

The Reliability Machine

The Uptime Institute classifies data centers by reliability using a four-tier system.

Tier I has a single path for power and cooling with no redundancy. Expected uptime: 99.671%, or about 29 hours of downtime per year. Fine for a development server, not acceptable for production.

Tier II adds some redundant components. Uptime: 99.741%, or about 22.7 hours of downtime per year.

Tier III introduces multiple active paths for power and cooling. You can maintain any component without taking the facility offline.

Uptime: 99.982%, about 1.6 hours of downtime per year.

Tier IV makes everything fully redundant. Two completely independent power paths, two independent cooling systems. Any single component can fail and the facility keeps running.

Uptime: 99.995%, or roughly 26 minutes of downtime per year.

Most hyperscale operators build Tier III or IV. When millions of users depend on your services, even 26 minutes of annual downtime is a lot.
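Those downtime figures follow directly from the uptime percentages. A small sketch of the conversion, assuming a 365-day year (the dictionary and function names are illustrative):

```python
HOURS_PER_YEAR = 365 * 24  # 8,760; leap years ignored

TIER_UPTIME_PCT = {"Tier I": 99.671, "Tier II": 99.741,
                   "Tier III": 99.982, "Tier IV": 99.995}

def downtime_hours(uptime_pct):
    """Expected downtime per year, in hours, for a given uptime percentage."""
    return (1 - uptime_pct / 100) * HOURS_PER_YEAR

for tier, pct in TIER_UPTIME_PCT.items():
    hours = downtime_hours(pct)
    print(f"{tier}: {hours:.1f} hours ({hours * 60:.0f} minutes) per year")
```

The jump from Tier II to Tier III is the big one: a quarter of a percentage point of uptime removes about 21 hours of annual downtime.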

Amazon, Google, and Microsoft go beyond the tier system with geographic distribution. They replicate data across multiple facilities so that an entire data center can go offline without users noticing.
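Why replication helps so much can be shown with a simple probability sketch. It assumes each facility fails independently, which is a strong simplification: real outages can be correlated through shared software or regional events. The function name is hypothetical and the 99.995% input is the Tier IV figure from above.

```python
def combined_availability(site_availability, sites):
    """Probability that at least one of `sites` independent facilities is up."""
    return 1 - (1 - site_availability) ** sites

for n in (1, 2, 3):
    a = combined_availability(0.99995, n)
    print(f"{n} site(s): {a:.10%} available")
```

Under the independence assumption, each extra site multiplies the chance of a total outage by the single-site failure probability, so even two sites push expected downtime far below what any single building can offer.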

The Network Underneath

A data center full of servers is useless without a network connecting them to each other and to the rest of the internet.

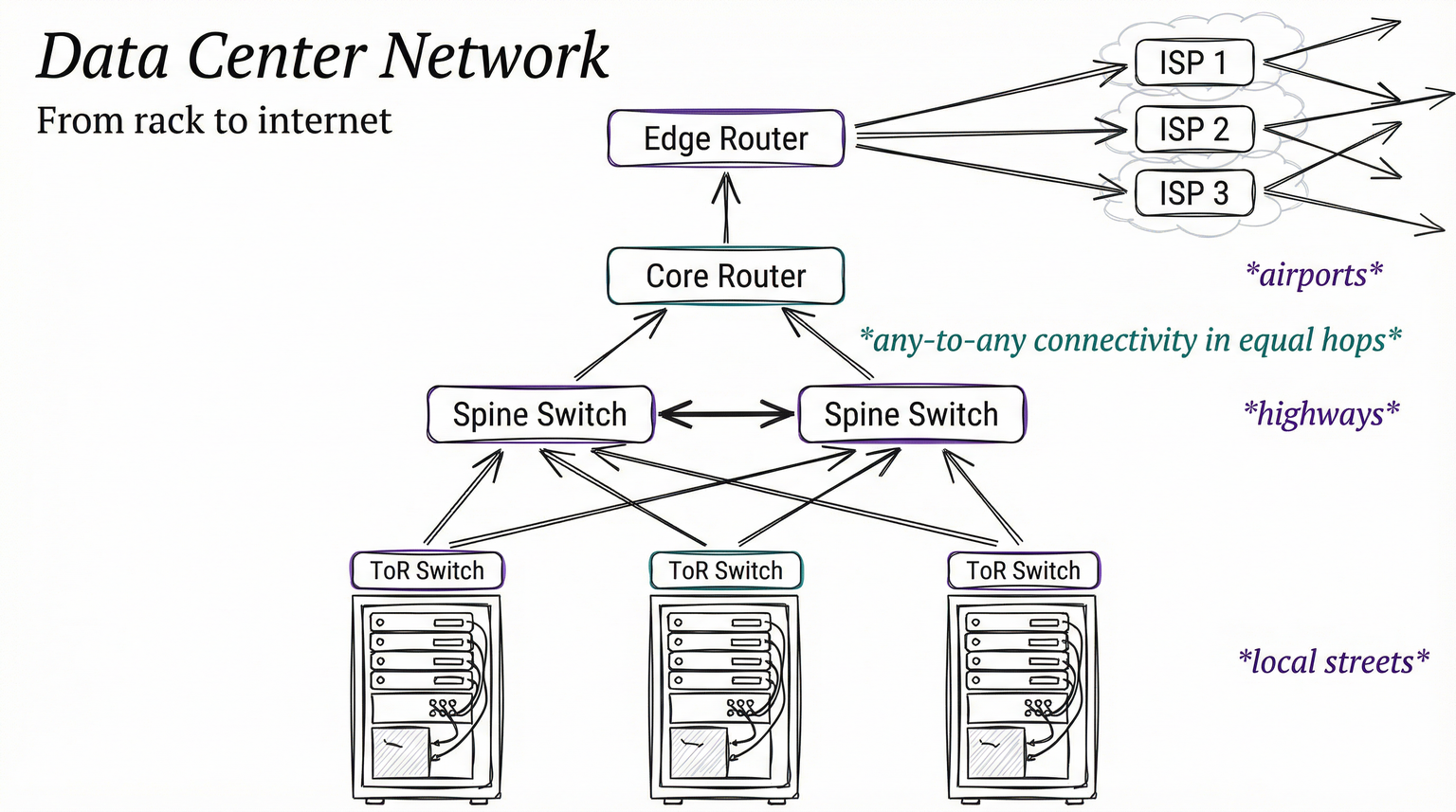

Inside the facility, the network uses a spine-and-leaf architecture. Each rack has a top-of-rack switch connecting all its servers. These leaf switches connect upward to spine switches that provide any-to-any connectivity across the building.

The design ensures that traffic between any two servers in different racks crosses the same number of switches (leaf, spine, leaf), giving predictable, low-latency performance.
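The uniform path length can be checked on a toy model. The sketch below builds a small two-tier fabric and counts switches on the shortest path between every pair of servers in different racks. The sizes (4 spines, 6 leaves, 3 servers per rack) and all node names are made up for illustration.

```python
from collections import deque
from itertools import combinations

def build_fabric(num_spines, num_leaves, servers_per_leaf):
    """Return an adjacency map for a two-tier spine-and-leaf fabric."""
    links = {}
    def connect(a, b):
        links.setdefault(a, set()).add(b)
        links.setdefault(b, set()).add(a)
    for leaf in range(num_leaves):
        for spine in range(num_spines):
            connect(f"leaf{leaf}", f"spine{spine}")   # every leaf to every spine
        for s in range(servers_per_leaf):
            connect(f"leaf{leaf}", f"srv{leaf}-{s}")  # servers hang off one leaf
    return links

def switches_on_path(links, src, dst):
    """Breadth-first search; returns the number of switches on a shortest path."""
    dist = {src: 0}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            return dist[node] - 1  # hops minus one = switches in between
        for nxt in links[node]:
            if nxt not in dist:
                dist[nxt] = dist[node] + 1
                queue.append(nxt)
    raise ValueError("no path")

fabric = build_fabric(num_spines=4, num_leaves=6, servers_per_leaf=3)
servers = [n for n in fabric if n.startswith("srv")]
cross_rack = {switches_on_path(fabric, a, b)
              for a, b in combinations(servers, 2)
              if a.split("-")[0] != b.split("-")[0]}  # different racks only
print(cross_rack)  # {3}: leaf, spine, leaf for every cross-rack pair
```

Servers in the same rack are the one exception: they share a leaf switch, so their traffic crosses only that single switch.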

Think of it like a city’s transportation system. Local streets connect houses to arterial roads. Arterial roads connect to highways. In a data center, rack switches are local streets, spine switches are highways, and edge routers are the airports connecting to the wider internet.

Edge routers connect the data center to multiple internet service providers through 100+ gigabit-per-second links. Using multiple providers ensures that if one connection fails, traffic reroutes through another.

The big three hyperscalers go further: they build their own private fiber networks, including undersea cables. Undersea cables as a whole carry about 98% of all international internet traffic.

The Future: Up

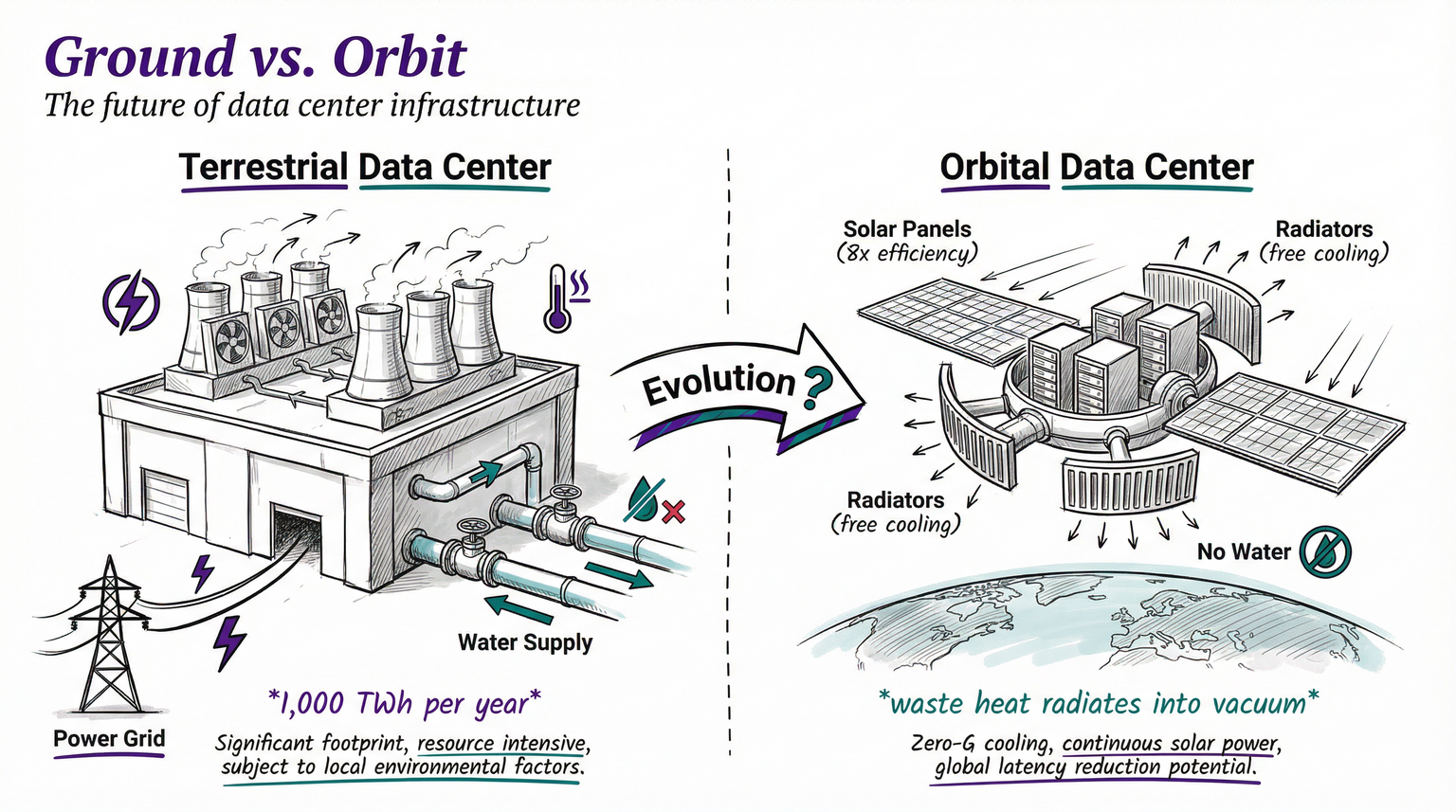

What happens when you run out of land, power, and water for data centers?

You go to space.

This is not science fiction. In 2025, a company called Starcloud deployed an NVIDIA H100-class system in orbit and became the first company to train a large language model in space. They also ran Google’s Gemini model on orbital hardware.

The logic is straightforward. In space, a solar panel can generate roughly 8 times more electricity than the same panel on Earth’s surface. No atmosphere, no weather, no nighttime in certain orbits.

Cooling becomes free: waste heat radiates directly into the vacuum as infrared radiation. No water needed. No cooling towers. No chillers.

Starcloud plans a 5-gigawatt orbital facility with solar and cooling panels spanning roughly 4 by 4 kilometers. They estimate energy costs could be 10 times lower than terrestrial alternatives, even after accounting for launch expenses.

Google has its own research project, Suncatcher, studying the engineering requirements for orbital computing. Their analysis suggests that launch costs need to drop below $200 per kilogram for space-based data centers to become economically competitive. Current costs are approaching that threshold as SpaceX and others drive down launch prices.

But the environmental math is not settled. Researchers at Saarland University found that when you account for rocket launch emissions, atmospheric reentry of hardware, and radiation shielding mass, space data centers could produce more carbon emissions than ground-based ones.

The rockets burn enormous amounts of fuel. Hardware needs replacing more often due to radiation damage. The massive radiator panels needed to dump heat into space add significant launch weight.

Early applications will likely focus on tasks where orbital location provides a direct advantage: Earth observation, wildfire detection, crop monitoring, weather prediction. Response times for these applications drop from hours to minutes when the processing happens in orbit rather than on the ground.

And Beyond That?

Here is where speculation gets interesting.

Some futurists estimate that digitizing a single human consciousness would require roughly 2.5 petabytes of storage and processing speeds of 10 quadrillion floating-point operations per second, or 10 petaflops. For context, the most powerful supercomputers in 2026 are exascale machines that already exceed that raw processing figure by more than a hundredfold. The storage is trivial by hyperscale standards.

The question is not whether the hardware could eventually support it. The question is power.

Running a single uploaded mind at current efficiency levels would consume more electricity than a small town. Running millions of them would dwarf current global energy production.

This is where the threads connect. The same infrastructure trajectory that is pushing data centers into orbit for AI workloads could, decades from now, be the foundation for something much stranger. The buildings we build today to train language models might be prototypes for facilities that house digital minds.

Or they might just keep running spreadsheets and streaming cat videos. The infrastructure does not care. It provides compute, storage, and networking. What we do with it is up to us.

The Physical Cost of Digital Life

Every email, every search, every AI conversation has a physical cost. A specific amount of electricity consumed, a specific amount of heat generated, a specific building in a specific location. Real people, real cables, real water or fluid or, eventually, the real vacuum of space.

The cloud is not a cloud. It is the fastest-growing category of physical infrastructure on the planet, with over $3 trillion in projected investment through 2030.

It is 1,000 terawatt-hours of annual electricity consumption and growing. It is the single largest driver of new power demand in the developed world.

Understanding where your data lives and what it costs to keep it alive matters.

The decisions about data center power, cooling, and location happening right now will shape everything from AI capabilities to climate commitments for decades to come.

T.

References

- Higher Loads, Faster Builds: 6 Data Center Trends in 2026 - Industry overview of 2026 data center construction trends including power density, cooling evolution, and build timelines
- Data Center Market Size to Reach $430.18 Bn in 2026 - Global market valuation, growth projections, and hyperscaler capital expenditure figures
- Why Liquid Cooling Is Becoming the Data Center Standard - Technical analysis of the shift from air cooling to direct-to-chip and immersion cooling for AI workloads
- Data Center Redundancy: N, N+1, 2N, and 2N+1 Explained - Uptime Institute tier classifications and redundancy configurations for critical infrastructure
- Spine-and-leaf Network Architecture Explained - How modern data center networking fabric replaces traditional three-tier designs
- U.S. Data Center Development Cost Guide - Per-megawatt construction costs across 19 U.S. markets and AI-ready facility premiums
- Moody’s Sees $3T in Data Center Spending by 2030 - Long-term capital expenditure projections for the global data center construction boom