A single transistor does almost nothing. It switches on or off. One or zero. That’s it.

But combine two transistors and you get a logic gate that makes decisions. Combine thousands of logic gates and you get a circuit that does arithmetic. Combine thousands of those circuits, billions of transistors in all, and you get a processor that can run a large language model.

Article 3 explained how silicon becomes a transistor. This article explains how transistors become a thinking machine. And why the specific way you organize those transistors determines whether you get a brilliant generalist or an army of specialists.

That distinction, between CPUs and GPUs, is the reason AI went from academic curiosity to a technology that changes industries.

From Transistors to Logic

A transistor is a switch. But switches become powerful when you combine them.

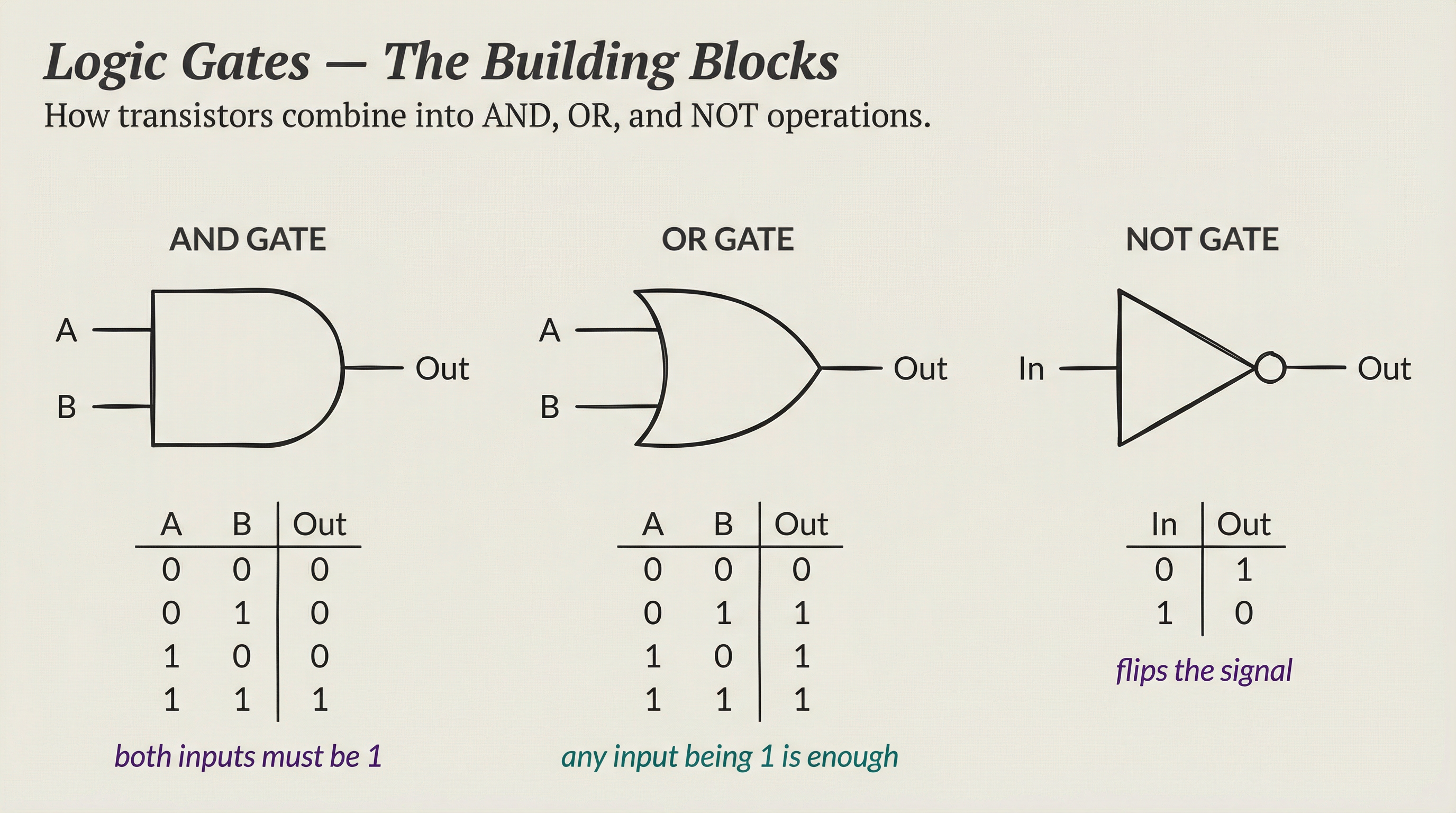

Take two transistors and wire them in series. Both must be “on” for current to flow through. That’s an AND gate. It only outputs 1 if both inputs are 1.

Wire two transistors in parallel instead. Current flows if either one is on. That’s an OR gate. It outputs 1 if any input is 1.

Add a transistor that inverts its input. On becomes off, off becomes on. That’s a NOT gate.

AND, OR, NOT. Three operations. That’s the entire foundation of digital logic.

Every calculation your computer performs, every pixel it renders, every word it predicts, reduces to combinations of these three operations. The math that runs neural networks is AND, OR, and NOT gates firing billions of times per second.
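To make the three gates concrete, here is a minimal Python sketch that models each one as a function on bits (0 or 1). The function names are illustrative, not from any library. It also builds XOR out of the three basic gates, as a small example of "combinations of these three operations."

```python
def and_gate(a: int, b: int) -> int:
    """Two switches in series: output 1 only if both inputs are 1."""
    return a & b


def or_gate(a: int, b: int) -> int:
    """Two switches in parallel: output 1 if either input is 1."""
    return a | b


def not_gate(a: int) -> int:
    """Inverter: 1 becomes 0, 0 becomes 1."""
    return 1 - a


def xor_gate(a: int, b: int) -> int:
    """Built only from AND, OR, NOT: (a OR b) AND NOT (a AND b)."""
    return and_gate(or_gate(a, b), not_gate(and_gate(a, b)))


def truth_table(gate):
    """Outputs for the inputs (0,0), (0,1), (1,0), (1,1), in that order."""
    return [gate(a, b) for a in (0, 1) for b in (0, 1)]


print("AND", truth_table(and_gate))  # [0, 0, 0, 1]
print("OR ", truth_table(or_gate))   # [0, 1, 1, 1]
print("XOR", truth_table(xor_gate))  # [0, 1, 1, 0]
```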

Logic Gates to Math

Logic gates combine into larger structures. The most important one for understanding processors is the adder.

A half adder uses two gates to add two single-bit numbers. A full adder handles carry bits. Chain 64 full adders together and you can add two 64-bit numbers in a single operation.

Build subtraction from addition (flip bits and add one). Build multiplication from repeated addition. Build division from repeated subtraction.
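The chain from gates to arithmetic can be sketched in a few lines of Python. This is a simulation under simplifying assumptions, not how real hardware is laid out: the helper names are made up for illustration, the `^` operator stands in for an XOR gate (itself built from AND, OR, and NOT), and real multipliers use faster schemes than repeated addition.

```python
def half_adder(a: int, b: int):
    """Add two bits. Returns (sum_bit, carry_bit)."""
    return a ^ b, a & b


def full_adder(a: int, b: int, carry_in: int):
    """Add two bits plus an incoming carry, using two half adders and an OR."""
    s1, c1 = half_adder(a, b)
    s2, c2 = half_adder(s1, carry_in)
    return s2, c1 | c2


def ripple_add(x: int, y: int, width: int = 64) -> int:
    """Add two unsigned integers one bit at a time, like a chain of
    `width` full adders. The result wraps around at `width` bits."""
    result, carry = 0, 0
    for i in range(width):
        bit, carry = full_adder((x >> i) & 1, (y >> i) & 1, carry)
        result |= bit << i
    return result


def ripple_sub(x: int, y: int, width: int = 64) -> int:
    """Subtract by flipping the bits of y, adding one, then adding to x."""
    mask = (1 << width) - 1
    negated_y = ripple_add(~y & mask, 1, width)
    return ripple_add(x, negated_y, width)


def multiply(x: int, y: int, width: int = 64) -> int:
    """Multiply by adding x to a running total y times."""
    product = 0
    for _ in range(y):
        product = ripple_add(product, x, width)
    return product


print(ripple_add(5, 7))   # 12
print(ripple_sub(12, 5))  # 7
print(multiply(6, 7))     # 42
```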

An Arithmetic Logic Unit (ALU) packages all these operations into a single component. Give it two numbers and an instruction (add, subtract, multiply, compare), and it produces a result.

A modern CPU core contains several ALUs. A modern GPU contains thousands.

The CPU: Master Chef

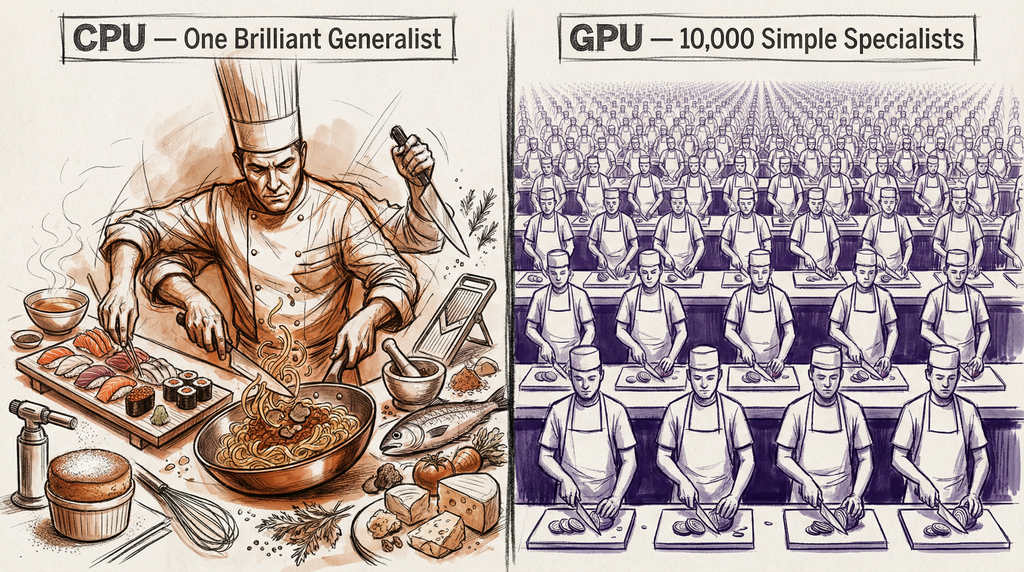

Think of a CPU like a master chef in a high-end restaurant.

The chef can make anything. Sushi, pasta, soufflé, molecular gastronomy. Each dish requires different techniques, different timing, different ingredients. The chef handles it all, switching between tasks, making judgment calls, adapting when something goes wrong.

But the chef works mostly alone. One dish at a time, start to finish. Incredibly versatile, but limited by being a single person.

That’s a CPU. It handles any type of computation, but it processes instructions mostly one at a time per core.

How a CPU Works

A CPU executes instructions through a cycle: fetch, decode, execute, store.

- Fetch: Read the next instruction from memory

- Decode: Figure out what the instruction means (add? multiply? load data?)

- Execute: The ALU performs the operation

- Store: Write the result back to memory or a register
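The four stages above can be sketched as a toy interpreter. Everything here is hypothetical: the three-instruction set, the register names, and the tuple format are invented for illustration and don’t correspond to any real CPU.

```python
def alu(op: str, a: int, b: int) -> int:
    """A tiny ALU: takes an operation and two numbers, returns a result."""
    if op == "ADD":
        return a + b
    if op == "SUB":
        return a - b
    if op == "MUL":
        return a * b
    raise ValueError(f"unknown operation: {op}")


def run(program, registers):
    """Run each instruction through fetch, decode, execute, store."""
    pc = 0  # program counter: index of the next instruction
    while pc < len(program):
        instruction = program[pc]                           # fetch
        op, dest, src1, src2 = instruction                  # decode
        result = alu(op, registers[src1], registers[src2])  # execute
        registers[dest] = result                            # store
        pc += 1
    return registers


program = [
    ("ADD", "r2", "r0", "r1"),  # r2 = r0 + r1
    ("MUL", "r3", "r2", "r2"),  # r3 = r2 * r2
    ("SUB", "r3", "r3", "r0"),  # r3 = r3 - r0
]
regs = run(program, {"r0": 2, "r1": 3, "r2": 0, "r3": 0})
print(regs["r3"])  # 23
```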

Modern CPUs run this cycle billions of times per second. An Intel Core i9-14900K boosts up to 6.0 GHz, meaning its clock can tick 6 billion times per second. Each tick can advance one or more instructions through the pipeline.

Making CPUs Faster

Engineers squeeze more performance from CPUs through several tricks:

Clock speed is the obvious one. Faster ticks mean more instructions per second. But mainstream clock speeds hit a wall around 2005 at roughly 3-4 GHz and have crept up only slowly since. Push higher and the chip generates too much heat.

The laws of physics set the ceiling.

Pipelining overlaps the fetch-decode-execute-store stages. While one instruction executes, the next one decodes, and the one after that fetches. Like an assembly line within the chef’s kitchen.
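A back-of-the-envelope model shows why the overlap pays off. This sketch assumes an idealized four-stage pipeline where every stage takes exactly one cycle and nothing ever stalls. Real pipelines are deeper and messier, so treat the numbers as an upper bound.

```python
def cycles_unpipelined(n_instructions: int, n_stages: int = 4) -> int:
    """Each instruction finishes all stages before the next one starts."""
    return n_instructions * n_stages


def cycles_pipelined(n_instructions: int, n_stages: int = 4) -> int:
    """The first instruction takes n_stages cycles to fill the pipeline.
    After that, one instruction completes every cycle."""
    if n_instructions == 0:
        return 0
    return n_stages + (n_instructions - 1)


n = 1000
print(cycles_unpipelined(n))  # 4000
print(cycles_pipelined(n))    # 1003
```

For long instruction streams the speedup approaches the number of stages, which is why deeper pipelines were an attractive way to raise throughput.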

Branch prediction guesses which instruction comes next. If the CPU guesses right (and modern predictors guess right 95-99% of the time), it saves the cost of waiting. If wrong, it throws away the speculative work and starts over.

Multiple cores put several CPUs on one chip. A modern desktop processor has 8-24 cores. Each core is an independent chef, working on its own task.

The Intel Core i9-14900K has 24 cores (8 performance, 16 efficiency). Apple’s M3 Ultra has up to 32 CPU cores.

Even with all these tricks, CPUs remain built for complex sequential work. They handle branching, conditional logic, and unpredictable data patterns well. When you need to run an operating system, parse a document, or execute business logic, a CPU is the right tool.

What they don’t do well is the same simple operation on millions of data points simultaneously.

The GPU: Assembly Line

Now picture a different kitchen. Instead of one master chef, you have 10,000 line cooks. Each one can only do one thing: chop vegetables, or flip burgers, or plate dishes.

No creativity, no improvisation. But give them all the same instruction and they execute it simultaneously on different ingredients.

Need to chop 10,000 onions? The master chef takes hours. The 10,000 line cooks take seconds.

That’s a GPU.

How GPUs Differ

GPUs were born to solve a specific problem: rendering pixels on a screen.

A 4K display has 8.3 million pixels. Each frame, the computer calculates the color of every single pixel. The math for each pixel is simple: multiply some vectors, apply lighting, blend colors.

But you need to do it 8.3 million times, 60 times per second. A CPU would handle each pixel sequentially. Way too slow.

A GPU handles thousands of pixels simultaneously by running the same calculation across many cores at once. Engineers call this approach SIMD: Single Instruction, Multiple Data. One instruction (“multiply these vectors”), applied to thousands of different data points in parallel. (NVIDIA calls its thread-based variant SIMT: Single Instruction, Multiple Threads.)
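The size of the pixel workload is easy to check, and the "one instruction, many data points" pattern is easy to mimic. In this sketch, `shade` is a hypothetical stand-in for a real pixel calculation, and the list comprehension runs one pixel at a time in Python. A GPU would run the same function on thousands of pixels at once.

```python
WIDTH, HEIGHT, FPS = 3840, 2160, 60  # 4K resolution at 60 frames per second

pixels_per_frame = WIDTH * HEIGHT
pixels_per_second = pixels_per_frame * FPS
print(pixels_per_frame)   # 8294400
print(pixels_per_second)  # 497664000


def shade(pixel, brightness: float):
    """The single 'instruction': scale one RGB pixel by a brightness factor."""
    r, g, b = pixel
    return (
        min(255, int(r * brightness)),
        min(255, int(g * brightness)),
        min(255, int(b * brightness)),
    )


# A tiny stand-in for a frame: the same operation applied to every pixel.
frame = [(200, 100, 50)] * 8
shaded = [shade(p, 0.5) for p in frame]
print(shaded[0])  # (100, 50, 25)
```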

GPU Architecture

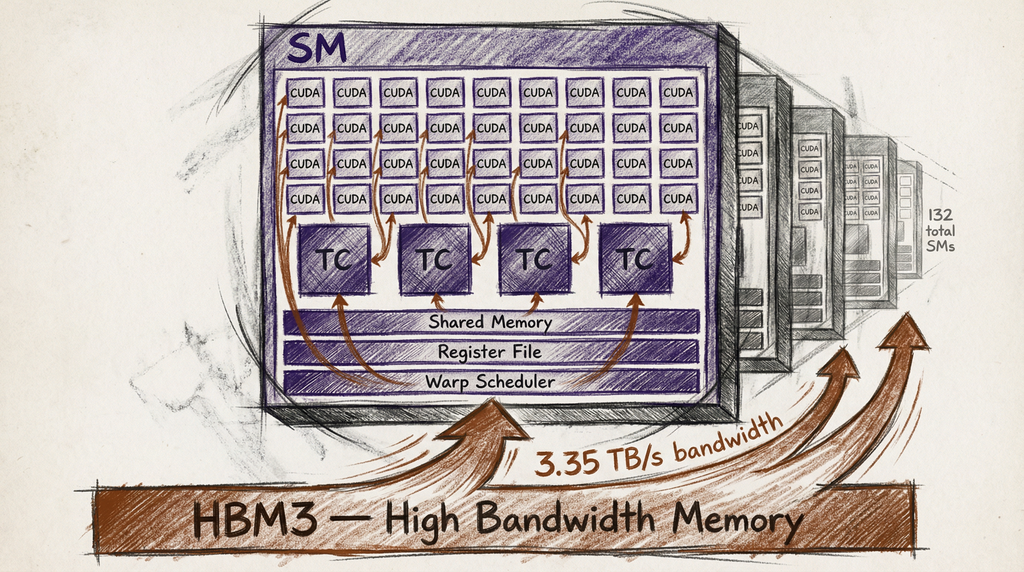

An NVIDIA H100 GPU has 16,896 CUDA cores. Each core is simple, much simpler than a CPU core. But when all 16,896 execute the same instruction on different data, the throughput is massive.

NVIDIA organizes the cores into Streaming Multiprocessors (SMs). The H100 has 132 SMs, each containing 128 CUDA cores. Think of an SM as a team of line cooks with a shared prep station. The SM manages scheduling, memory access, and coordination for its group of cores.

Modern AI GPUs add Tensor Cores, specialized circuits built specifically for matrix multiplication. The H100 has 528 Tensor Cores. These handle the core math operation of neural networks: multiplying large matrices of numbers. In NVIDIA’s first Tensor Core generation, a single Tensor Core performed a 4x4 matrix multiply-add in one clock cycle, an operation that would take a CUDA core dozens of cycles. Newer generations do even more work per cycle.
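To see why a 4x4 matrix multiply-add is a lot of work for a general-purpose core, here is the operation D = A x B + C written out in plain Python, counting each multiply-add. The function name and the counter are for illustration only.

```python
def matmul_add_4x4(a, b, c):
    """Compute D = A x B + C for 4x4 matrices given as nested lists.
    Returns (D, number_of_multiply_adds)."""
    n = 4
    d = [[c[i][j] for j in range(n)] for i in range(n)]  # start from C
    multiply_adds = 0
    for i in range(n):
        for j in range(n):
            for k in range(n):
                d[i][j] += a[i][k] * b[k][j]  # one multiply-add
                multiply_adds += 1
    return d, multiply_adds


identity = [[1 if i == j else 0 for j in range(4)] for i in range(4)]
b = [[i * 4 + j for j in range(4)] for i in range(4)]
ones = [[1] * 4 for _ in range(4)]

d, count = matmul_add_4x4(identity, b, ones)
print(count)  # 64
print(d[0])   # [1, 2, 3, 4]
```

A core that performs one multiply-add per cycle needs 64 steps for this. A circuit wired to do the whole tile at once needs one.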

The NVIDIA B200, announced in 2024, pushes further: 208 billion transistors, 18,432 CUDA cores, and next-generation Tensor Cores that deliver roughly 2.5x the AI performance of the H100.

Why GPUs Dominate AI

Neural network training is matrix multiplication at massive scale.

When you train a large language model, the core operation is: take a matrix of input values, multiply it by a matrix of weights, add biases, apply an activation function, repeat. Billions of times.

Each individual multiplication is simple. But you do the same operation across millions of parameters simultaneously. This is the exact pattern GPUs handle best.

Here’s the math. A single layer of a transformer model might multiply a 4,096 x 4,096 matrix by a 4,096 x 1 vector. That’s about 16.8 million multiply-add operations.
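The operation count follows directly from the shapes: every row of the matrix needs one multiply-add per column. A small sketch, with illustrative function names and a 2x2 example standing in for the 4,096 x 4,096 case:

```python
def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector: one dot product per row."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def multiply_adds(rows: int, cols: int) -> int:
    """Each of the `rows` outputs needs `cols` multiply-add operations."""
    return rows * cols


print(matvec([[1, 2], [3, 4]], [10, 1]))  # [12, 34]
print(multiply_adds(4096, 4096))          # 16777216
```

Every one of those dot products is independent of the others, which is what lets a GPU compute them in parallel.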

A large model has hundreds of these layers. Training requires running this forward pass millions of times, plus backpropagation.

A CPU processes these operations mostly one at a time. A GPU processes thousands in parallel. For matrix multiplication specifically, a GPU can be 100-1,000x faster than a CPU.

| Task | CPU (sequential) | GPU (parallel) |
|---|---|---|
| One complex calculation | Fast | Slow (overhead) |
| Branching logic | Excellent | Poor |
| 10,000 identical calculations | Minutes | Seconds |
| Matrix multiplication (AI) | Hours | Minutes |

That’s why NVIDIA went from a gaming company to one of the most valuable companies in the world. Their hardware matches the mathematical structure of AI.

The Memory Problem

Raw computation isn’t enough. You also need to feed data to the cores fast enough.

Think of it this way. Your 10,000 line cooks are ready to chop onions, but there’s only one small door to the pantry. Cooks spend most of their time waiting for onions instead of chopping.

This is the memory bandwidth bottleneck. The GPU can compute faster than memory can deliver data.

The H100 uses HBM3 (High Bandwidth Memory), stacks of memory chips physically mounted on the same package as the GPU. This gives it 3.35 TB/s of memory bandwidth, many times what a typical CPU gets from conventional DDR memory.

The B200 pushes to HBM3e with 8 TB/s bandwidth. Each generation, memory bandwidth grows because it’s often the real bottleneck, not computation.

GPU memory capacity matters too. The H100 has 80 GB of HBM3. A large language model with 70 billion parameters needs about 140 GB just to store the weights (at 16-bit precision).

That means you need at least two H100s just to hold the model, never mind running it. This is why you see GPU clusters with thousands of GPUs connected by high-speed interconnects like NVLink. No single GPU is big enough for frontier AI models.
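The capacity arithmetic above is simple enough to check in a few lines. This sketch counts only the weights. Activations, optimizer state, and caches need additional memory, so real deployments need more headroom than this lower bound suggests. The function names are illustrative.

```python
import math


def weight_memory_gb(n_params: int, bits_per_param: int) -> float:
    """Memory needed to store the weights alone, in gigabytes (10^9 bytes)."""
    return n_params * bits_per_param / 8 / 1e9


def gpus_needed(model_gb: float, gpu_memory_gb: float) -> int:
    """Minimum number of GPUs whose combined memory can hold the weights."""
    return math.ceil(model_gb / gpu_memory_gb)


model_gb = weight_memory_gb(70_000_000_000, 16)  # 70B parameters at 16-bit
print(model_gb)                   # 140.0
print(gpus_needed(model_gb, 80))  # 2
```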

Beyond GPUs: Specialized AI Chips

Engineers originally built GPUs for gaming, not AI. Several companies now design chips specifically for AI workloads.

Google TPUs (Tensor Processing Units) were originally built around TensorFlow workloads and now also run JAX and PyTorch. TPU v5e focuses on inference efficiency. Google uses them internally and offers them through Google Cloud.

TPUs sacrifice general-purpose flexibility for higher efficiency on specific AI operations.

AWS Trainium is Amazon’s custom training chip. Trainium2, launched in late 2024, targets cost-effective large-scale training. Amazon designed it to reduce dependence on NVIDIA.

Apple Neural Engine ships inside every M-series chip. It handles on-device AI tasks (Siri, photo processing, text prediction) at low power. The M3 Ultra’s Neural Engine runs at 31 TOPS (trillion operations per second).

Cerebras WSE-3 takes a different approach entirely. Instead of a chip the size of your thumbnail, it’s a chip the size of a dinner plate. One massive wafer-scale chip with 4 trillion transistors and 900,000 cores. Far fewer interconnect bottlenecks, because the cores sit on one piece of silicon instead of being spread across separate chips.

The trend is clear: general-purpose GPUs handle exploration and flexibility. Once you know exactly what computation you need, specialized chips do it more efficiently.

Performance Per Watt: The Real Metric

Raw speed doesn’t matter if a chip melts or bankrupts your power budget.

The H100 draws 700W and delivers roughly 990 teraflops of AI performance (FP16). That’s about 1.4 teraflops per watt.

The B200 draws 1,000W but delivers roughly 2,500 teraflops. That’s 2.5 teraflops per watt, nearly double the efficiency.
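The efficiency figures can be reproduced from the numbers quoted above. This sketch takes the power draw and teraflops values as given in the text. It is plain division, not a benchmark.

```python
def tflops_per_watt(tflops: float, watts: float) -> float:
    """Efficiency: teraflops delivered per watt of power drawn."""
    return tflops / watts


h100 = tflops_per_watt(990, 700)
b200 = tflops_per_watt(2500, 1000)

print(round(h100, 2))         # 1.41
print(b200)                   # 2.5
print(round(b200 / h100, 2))  # 1.77
```

With these figures the efficiency gain works out to roughly 1.8x.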

Each generation improves performance per watt, not just raw performance. This matters because data centers have fixed power budgets. A data center allocated 100 megawatts can’t just add more GPUs indefinitely. The chip that does more work per watt wins.

This is why the GPU spec sheet matters beyond marketing. When NVIDIA announces a new chip, the number that determines AI infrastructure planning is performance-per-watt, not peak teraflops.

Why This Matters

If you work with AI, understanding processors explains the constraints you bump into daily.

Why training is expensive: Training a frontier model requires thousands of GPUs running for months. At $2-3 per GPU-hour for H100s, the compute bill alone reaches tens of millions of dollars.
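A rough estimate shows how the bill adds up. The GPU count and duration below are hypothetical round numbers chosen to match "thousands of GPUs running for months." Only the $2-3 hourly rate comes from the text above.

```python
def training_cost_usd(n_gpus: int, days: int, usd_per_gpu_hour: float) -> float:
    """Total rental cost: GPUs x hours x hourly rate."""
    return n_gpus * days * 24 * usd_per_gpu_hour


# Hypothetical run: 5,000 H100s for 90 days (assumed, not a real training run).
low = training_cost_usd(5_000, 90, 2.0)
high = training_cost_usd(5_000, 90, 3.0)

print(f"${low:,.0f} to ${high:,.0f}")  # $21,600,000 to $32,400,000
```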

Why inference has latency: When you chat with an AI, each response requires your prompt to flow through hundreds of matrix multiplications. GPU speed and memory bandwidth determine how fast that happens.

Why NVIDIA matters so much: They control an estimated 80%+ of the AI chip market. Their CUDA software ecosystem, built over more than 15 years, creates massive switching costs. Migrating AI code from CUDA to another platform takes significant engineering effort.

Why model architecture matters: Techniques like quantization (using fewer bits per parameter) and distillation (training smaller models to mimic larger ones) reduce the computational load. These aren’t just algorithmic tricks. They’re direct responses to hardware constraints.
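Quantization is easier to picture with a tiny example. The sketch below shows one simple scheme, symmetric 8-bit quantization with a single shared scale. Production libraries use more refined variants, and the sample weights are made up.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(quantized, scale):
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.003, 1.0]  # made-up example values
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

# Rounding error per weight is at most half of one quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(quantized)
print(max_error <= scale / 2 + 1e-12)  # True
```

Each weight now fits in 8 bits instead of 16, halving the memory and bandwidth needed, at the cost of a small rounding error.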

The processor defines what’s computationally possible. The GPU made AI practical by matching silicon architecture to the mathematical structure of neural networks.

What’s Next

We’ve gone from electricity to semiconductors to transistors becoming processors. But processors need a home.

In the next article, we’ll look at where all these GPUs actually live: data centers. Thousands of processors packed into racks, connected by high-speed networks, cooled by systems that can consume a large share of the facility’s power.

The “cloud” isn’t a metaphor. It’s a factory floor.

References

- NVIDIA H100 Tensor Core GPU Architecture: Official specifications for the H100 GPU including CUDA core counts and memory bandwidth

- NVIDIA Blackwell Architecture: B200 GPU specifications and performance comparisons

- How CPUs Work (Branch Education): Visual explanation of the fetch-decode-execute cycle

- Google TPU Documentation: Overview of Tensor Processing Unit architecture and use cases

- Cerebras WSE-3 Wafer-Scale Engine: Specifications for the wafer-scale AI chip approach